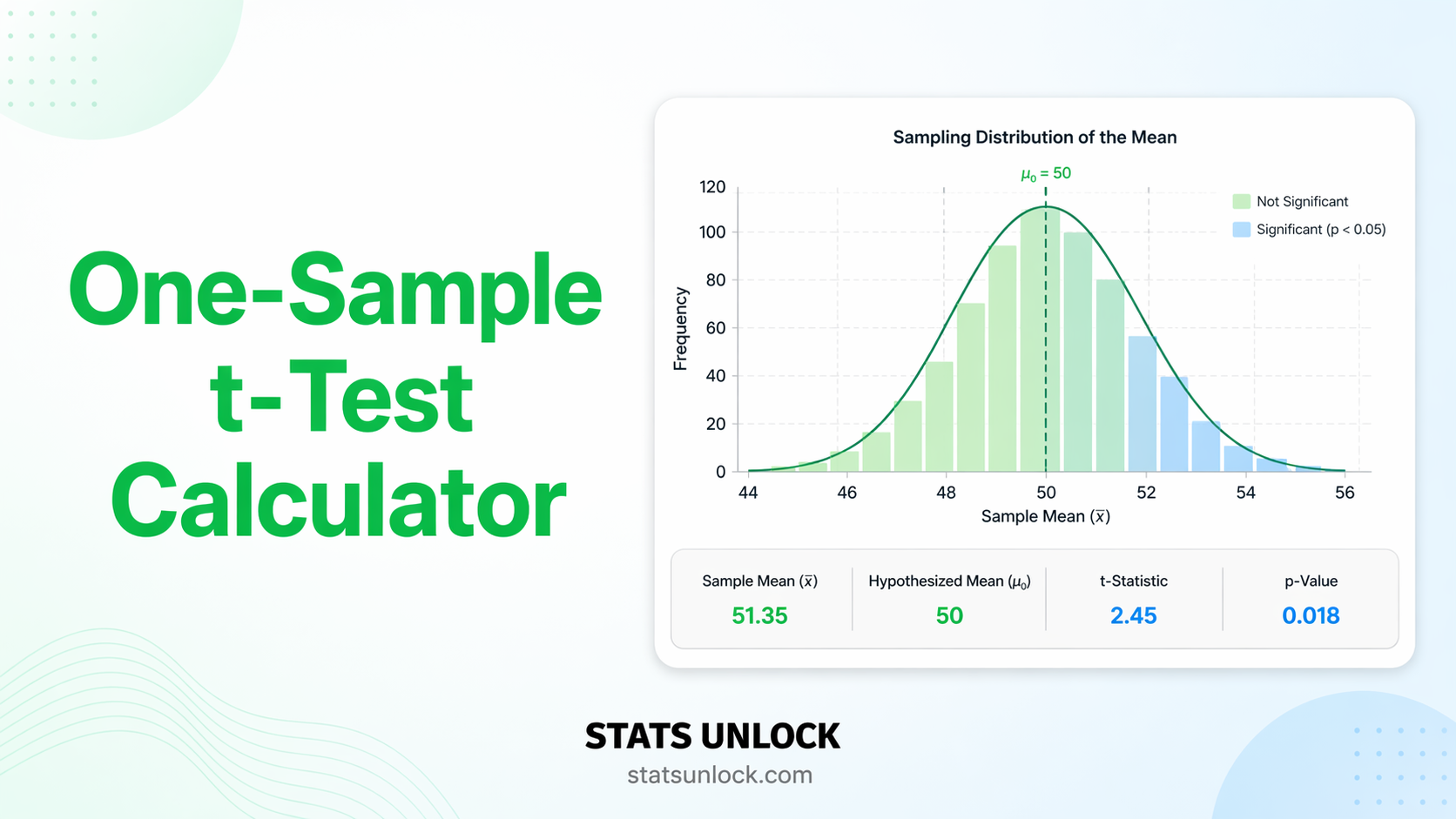

One-Sample t-Test Calculator

Test whether your sample mean differs significantly from a known or hypothesized population value (μ₀). Get t-statistic, p-value, Cohen's d, confidence interval, and five APA-ready reporting templates — free, no software needed.

Formulas Used

Technical Notes

- Degrees of freedom: df = n − 1. One degree of freedom is lost because the sample mean (x̄) is used to estimate the population mean when computing s.

- Standard error (SE): SE = s / √n. As sample size increases, the SE shrinks, making the test more sensitive to small deviations from μ₀.

- Cohen's d for one-sample test: d = (x̄ − μ₀) / s. Unlike the two-sample d, there is no pooling — the single sample SD is used as the standardiser.

- p-value computation: Uses the t-distribution CDF with df = n − 1. For two-tailed tests, p = 2 × P(T > |t|).

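- Normality: The test assumes the population is approximately normal. By the Central Limit Theorem, the sampling distribution of the mean approaches normality as n grows, so the test is usually robust to moderate non-normality when n ≥ 30. This is a rule of thumb, not a guarantee: strongly skewed or heavy-tailed data may need larger samples. For smaller samples, visual inspection of a histogram or Q-Q plot is recommended.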

- When to use z instead: If the population standard deviation (σ) is known exactly, use the one-sample z-test instead. In practice, σ is almost never known, so the t-test is appropriate.
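The notes above can be sketched in plain Python. This is a minimal illustration, not the calculator's actual implementation: the helper names (`t_pdf`, `t_cdf`, `one_sample_t`) and the five scores are hypothetical, and the t-distribution CDF is approximated by Simpson's rule only to keep the example free of dependencies. In practice a library routine such as SciPy's `scipy.stats.ttest_1samp` would normally be used.

```python
import math

def t_pdf(x, df):
    """Density of Student's t-distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def t_cdf(x, df, steps=2000):
    """Approximate P(T <= x) with Simpson's rule on [0, |x|] plus symmetry."""
    a = abs(x)
    if a == 0:
        return 0.5
    h = a / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3  # P(0 <= T <= |x|)
    return 0.5 + area if x > 0 else 0.5 - area

def one_sample_t(data, mu0):
    """Return the quantities listed in the technical notes."""
    n = len(data)
    mean = sum(data) / n
    # Sample SD with n - 1 in the denominator (one df spent on the mean).
    s = math.sqrt(sum((x - mean) ** 2 for x in data) / (n - 1))
    se = s / math.sqrt(n)                 # SE = s / sqrt(n)
    t = (mean - mu0) / se
    df = n - 1
    d = (mean - mu0) / s                  # Cohen's d, no pooling
    p = 2 * (1 - t_cdf(abs(t), df))       # two-tailed: 2 * P(T > |t|)
    return {"mean": mean, "s": s, "se": se, "t": t, "df": df, "d": d, "p": p}

# Illustrative data only: five hypothetical scores tested against mu0 = 70.
result = one_sample_t([72, 68, 75, 81, 66], mu0=70)
print({k: round(v, 3) for k, v in result.items()})
```

For these illustrative scores the sketch gives t ≈ 0.90 on 4 degrees of freedom, d ≈ 0.40, and a two-tailed p ≈ 0.42.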

Determine how many observations you need before collecting data, or check the power of your completed study. Enter any three values to compute the fourth.

Cohen's d Reference Table (One-Sample t-Test)

| Label | d | Meaning | Required n (two-tailed α = .05, 80% power) |
|---|---|---|---|
| Small | 0.2 | Subtle deviation; requires a large sample to detect | 199 |
| Medium | 0.5 | Moderate deviation; detectable with a reasonable sample | 34 |
| Large | 0.8 | Obvious deviation from μ₀; detectable with small samples | 15 |
| Very Large | 1.2 | Dramatic deviation; clear even without formal testing | 8 |
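Required sample sizes of this kind can be approximated with a short function. The sketch below is an assumption about one reasonable method, not a description of how this page's calculator works: it uses the normal approximation with a small-sample correction term (z²/2), whereas an exact calculation relies on the noncentral t-distribution (for example R's `power.t.test`). The function name `approx_n_one_sample` is hypothetical, and results can differ by a couple of observations from figures obtained with other approximations.

```python
import math
from statistics import NormalDist

def approx_n_one_sample(d, alpha=0.05, power=0.80, two_tailed=True):
    """Approximate n for a one-sample t-test to detect effect size d."""
    z = NormalDist()  # standard normal
    z_alpha = z.inv_cdf(1 - alpha / 2) if two_tailed else z.inv_cdf(1 - alpha)
    z_power = z.inv_cdf(power)
    # Normal-approximation sample size plus a correction for estimating sigma.
    n = ((z_alpha + z_power) / d) ** 2 + z_alpha ** 2 / 2
    return math.ceil(n)

for d in (0.2, 0.5, 0.8, 1.2):
    print(d, approx_n_one_sample(d))
```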

The one-sample t-test answers: "Is my sample mean consistent with a known or assumed population value?" It compares a single group's mean to a fixed benchmark — not to another group's mean.

Decision Checklist

- ✅ You have one group of continuous measurements

- ✅ You have a specific known or hypothesized value (μ₀) to test against

- ✅ Your dependent variable is on an interval or ratio scale

- ✅ Observations are independent of each other

- ✅ Data are approximately normal, or n ≥ 30 (CLT applies)

- ❌ Do NOT use if comparing two separate groups → use Independent Samples t-Test

- ❌ Do NOT use if comparing pre and post measurements → use Paired t-Test

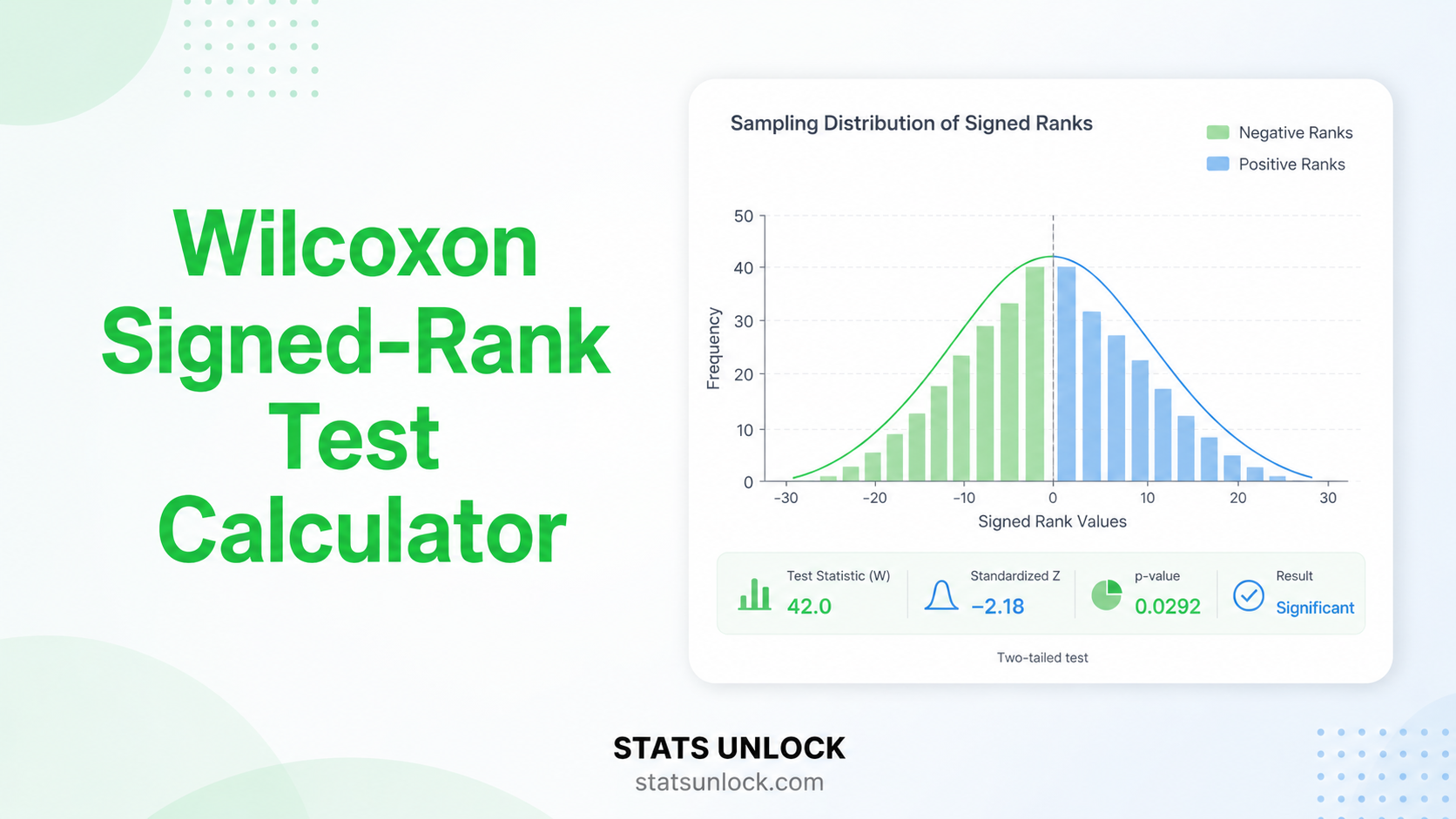

- ❌ Do NOT use if data are ordinal or heavily skewed with small n → use Wilcoxon Signed-Rank Test

- ❌ Do NOT use if population σ is known → use One-Sample z-Test
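The exclusion rules in the checklist can be read as a small decision procedure. The function below is a hypothetical sketch of that reading; the name `recommend_test` and its parameters are illustrative, and the order in which the rules are checked is an assumption rather than something the checklist specifies.

```python
def recommend_test(n_groups=1, paired=False, sigma_known=False,
                   ordinal_or_skewed_small_n=False):
    """Map the checklist's exclusion rules to a suggested test."""
    if paired:
        # Pre/post measurements on the same units.
        return "Paired t-Test"
    if n_groups == 2:
        # Two separate, independent groups.
        return "Independent Samples t-Test"
    if ordinal_or_skewed_small_n:
        return "Wilcoxon Signed-Rank Test"
    if sigma_known:
        return "One-Sample z-Test"
    # One group, continuous data, benchmark value, sigma unknown.
    return "One-Sample t-Test"

print(recommend_test())                  # one group, sigma unknown
print(recommend_test(sigma_known=True))  # population sigma known
```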

Real-World Examples

Testing whether the mean exam score of a class (M = 74.2) differs significantly from the national average of 70 to evaluate teaching effectiveness.

Testing whether a sample of patients' resting blood pressure (M = 128.4 mmHg) differs significantly from the healthy threshold of 120 mmHg.

Testing whether the mean weight of a production batch (M = 498.7 g) differs significantly from the target specification of 500 g.

Testing whether a sample's mean reaction time (M = 263 ms) differs significantly from a published normative value of 250 ms.

Related Tests — Decision Tree

What is the one-sample t-test and when should I use it?

The one-sample t-test tests whether the mean of a single sample differs significantly from a known or hypothesized population value (μ₀). Use it when you have one continuous variable measured in one group and a specific benchmark to compare against — for example, testing whether a class average differs from a national norm, or whether a product weight differs from its specification.

What is the hypothesized mean (μ₀) and how do I choose it?

μ₀ (mu-zero) is the specific population mean your null hypothesis assumes. It should come from prior research, clinical guidelines, industry standards, or theoretical reasoning — NOT from your own data. Examples: national exam average (70%), clinical blood pressure threshold (120 mmHg), engineering specification (500 g), published reaction time norm (250 ms). If you do not have a meaningful μ₀, the one-sample t-test may not be the right test.

What is a p-value and how do I interpret it for this test?

The p-value is the probability of observing a sample mean as far from μ₀ as yours (or farther), assuming the null hypothesis is true (μ = μ₀). A p-value of 0.04 means: if the population mean really equals μ₀, there is only a 4% chance of getting a sample mean this extreme by random chance. It does NOT mean there is a 4% chance the null hypothesis is true.
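This definition can be made concrete with a simulation. The sketch below, under the assumption of a normal population, repeatedly draws samples from a population whose mean really is μ₀ and counts how often the simulated |t| is at least as large as the observed one. The function names, the five scores, and the seed are all illustrative, and the result is a Monte Carlo estimate rather than an exact p-value.

```python
import math
import random

def t_stat(sample, mu0):
    """One-sample t statistic: (mean - mu0) / (s / sqrt(n))."""
    n = len(sample)
    mean = sum(sample) / n
    s = math.sqrt(sum((x - mean) ** 2 for x in sample) / (n - 1))
    return (mean - mu0) / (s / math.sqrt(n))

def simulated_p_value(data, mu0, n_sims=20000, seed=1):
    """Estimate the two-tailed p-value by simulating the null hypothesis."""
    rng = random.Random(seed)
    observed = abs(t_stat(data, mu0))
    n = len(data)
    extreme = 0
    for _ in range(n_sims):
        # Sample from a normal population whose mean really equals mu0.
        # The SD of 1.0 is arbitrary: under H0 the t statistic is scale-free.
        fake = [rng.gauss(mu0, 1.0) for _ in range(n)]
        if abs(t_stat(fake, mu0)) >= observed:
            extreme += 1
    return extreme / n_sims

# Hypothetical scores tested against mu0 = 70.
print(simulated_p_value([72, 68, 75, 81, 66], mu0=70))
```

The estimate should land close to the analytic two-tailed p-value for the same data, and it describes the data under the null hypothesis, not the probability that the null hypothesis is true.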

What does Cohen's d mean for a one-sample t-test?

Cohen's d = (x̄ − μ₀) / s. It measures how many sample standard deviations the sample mean falls from μ₀. Benchmarks: d = 0.2 = small, d = 0.5 = medium, d = 0.8 = large (Cohen, 1988). Unlike the p-value, d does not depend on sample size — it tells you the practical magnitude of the deviation from μ₀, not just whether it reached significance.
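The contrast with the p-value follows from the two formulas: since t = (x̄ − μ₀) / (s / √n) and d = (x̄ − μ₀) / s, it follows that t = d × √n. A brief sketch, with a hypothetical helper name and an illustrative d of 0.4, shows the same effect size producing ever larger t-statistics as n grows:

```python
import math

def t_from_d(d, n):
    """t statistic implied by effect size d and sample size n (t = d * sqrt(n))."""
    return d * math.sqrt(n)

# Same effect size, increasing sample size: d stays fixed while t grows.
for n in (10, 40, 160):
    print(f"n = {n:3d}  d = 0.40  t = {t_from_d(0.4, n):.2f}")
```

A deviation of fixed practical size can therefore be non-significant in a small sample and highly significant in a large one.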

What assumptions does the one-sample t-test require?

1. Independence: Each observation must come from a different, unrelated individual or unit.

2. Continuous DV: The variable must be on an interval or ratio scale.

3. Normality: The population distribution should be approximately normal. For n ≥ 30, the CLT usually makes the test robust to moderate non-normality. For smaller samples, check with Q-Q plots or the Shapiro-Wilk test.

4. No extreme outliers: Outliers can heavily influence the mean and distort results, especially in small samples.

How many observations do I need for a one-sample t-test?

As a rough rule of thumb, aim for at least 20–30 observations. The test remains valid with fewer when the data are normally distributed, but power to detect anything other than a large effect will be low. For 80% power to detect a medium effect (d = 0.5) at α = 0.05 (two-tailed), you need approximately 34 participants. For a small effect (d = 0.2), you need about 199. Use the Sample Size & Power Calculator on this page for exact values based on your expected effect size.

One-tailed or two-tailed? Which should I choose?

Two-tailed tests whether the mean differs from μ₀ in either direction. One-tailed (right or left) tests a specific direction only. Always choose two-tailed unless you pre-specified a directional hypothesis before collecting data, with a strong theoretical or prior-evidence basis. Choosing one-tailed after seeing the data to achieve a significant result is a form of p-hacking and inflates your Type I error rate.

How do I report one-sample t-test results in APA 7th edition format?

Format: "A one-sample t-test indicated that [DV] (M = ___, SD = ___) [did / did not] differ significantly from μ₀ = ___, t(df) = ___, p [< / =] ___, d = ___. A 95% CI for the mean difference ranged from ___ to ___."

Rules: italicise all symbols (t, p, M, SD, d); report p to 3 decimal places; write p < .001 not p = .000; always include effect size and CI.
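The template can be filled in programmatically. The sketch below is a hypothetical helper, not the calculator's own template engine: `format_p` and `apa_report` are illustrative names, the input numbers are made-up example values, and italics are left to whatever system renders the final text.

```python
def format_p(p):
    """APA-style p-value: three decimals, no leading zero, floor at < .001."""
    if p < 0.001:
        return "p < .001"
    return "p = " + f"{p:.3f}".lstrip("0")

def apa_report(dv, m, sd, mu0, t, df, p, d, ci_low, ci_high, alpha=0.05):
    """Fill in the reporting template with the supplied statistics."""
    verdict = "did" if p < alpha else "did not"
    return (
        f"A one-sample t-test indicated that {dv} (M = {m:.2f}, SD = {sd:.2f}) "
        f"{verdict} differ significantly from μ₀ = {mu0}, "
        f"t({df}) = {t:.2f}, {format_p(p)}, d = {d:.2f}. "
        f"A 95% CI for the mean difference ranged from "
        f"{ci_low:.2f} to {ci_high:.2f}."
    )

# Hypothetical inputs for illustration only.
print(apa_report("exam scores", m=72.4, sd=5.94, mu0=70, t=0.903, df=4,
                 p=0.417, d=0.404, ci_low=-4.98, ci_high=9.78))
```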

What is the difference between the one-sample t-test and the one-sample z-test?

Both test whether a sample mean equals μ₀, but the z-test requires knowing the exact population standard deviation (σ). Since σ is almost never known in practice, the t-test is used instead — it estimates σ from the sample (s) and uses the t-distribution, which has heavier tails to account for that extra uncertainty. As n increases, the t-distribution approaches the normal distribution, making the two tests nearly identical for large samples.

What if my result is non-significant?

A non-significant result (p ≥ α) does not prove the population mean equals μ₀ — it only means the data do not provide enough evidence to conclude otherwise. Possible reasons: (1) The effect is genuinely small or absent. (2) The study was underpowered (check the power calculator above). (3) There is high within-sample variability. Consider reporting the observed effect size and confidence interval even when p ≥ α — they convey more information than the binary significant/non-significant decision.

The following references support the statistical methods used in this one-sample t-test calculator, covering effect size interpretation, p-value reporting, and best practices in hypothesis testing.

- Student [Gosset, W. S.]. (1908). The probable error of a mean. Biometrika, 6(1), 1–25. https://doi.org/10.1093/biomet/6.1.1

- Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates.

- American Psychological Association. (2020). Publication manual of the American Psychological Association (7th ed.). https://doi.org/10.1037/0000165-000

- Field, A. (2018). Discovering statistics using IBM SPSS statistics (5th ed.). SAGE Publications.

- Gravetter, F. J., & Wallnau, L. B. (2021). Statistics for the behavioral sciences (10th ed.). Cengage Learning.

- Cumming, G. (2014). The new statistics: Why and how. Psychological Science, 25(1), 7–29. https://doi.org/10.1177/0956797613504966

- Lakens, D. (2013). Calculating and reporting effect sizes to facilitate cumulative science. Frontiers in Psychology, 4, 863. https://doi.org/10.3389/fpsyg.2013.00863

- Wasserstein, R. L., & Lazar, N. A. (2016). The ASA's statement on p-values: Context, process, and purpose. The American Statistician, 70(2), 129–133. https://doi.org/10.1080/00031305.2016.1154108

- Sullivan, G. M., & Feinn, R. (2012). Using effect size — or why the P value is not enough. Journal of Graduate Medical Education, 4(3), 279–282. https://doi.org/10.4300/JGME-D-12-00156.1

- R Core Team. (2024). R: A language and environment for statistical computing. https://www.R-project.org/

- Virtanen, P., et al. (2020). SciPy 1.0: Fundamental algorithms for scientific computing in Python. Nature Methods, 17, 261–272. https://doi.org/10.1038/s41592-019-0686-2

- NIST/SEMATECH. (2013). e-Handbook of statistical methods. https://www.itl.nist.gov/div898/handbook/