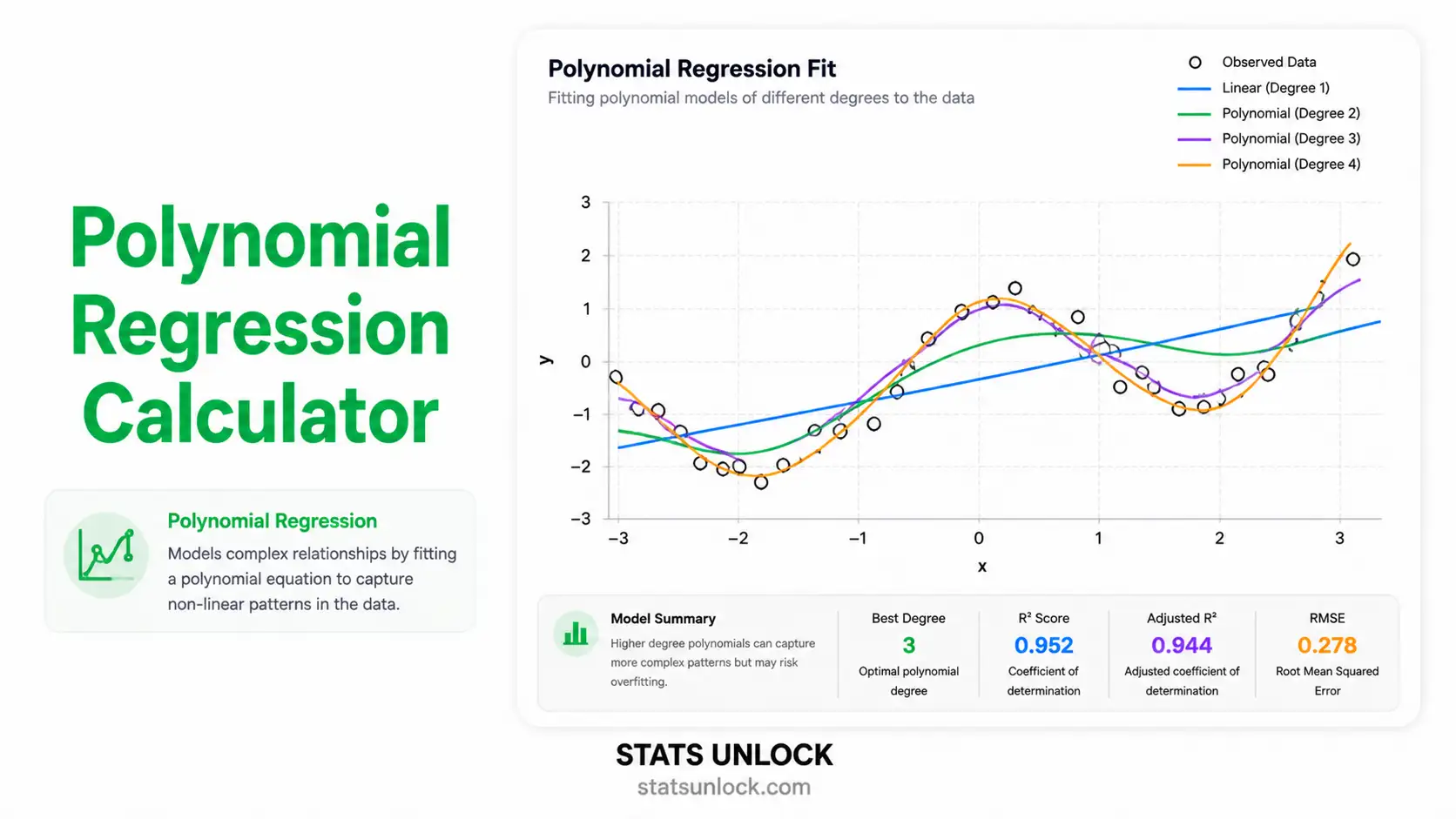

Polynomial Regression Calculator

Fit quadratic, cubic, and higher-order polynomial curves to your data. Get the regression equation, R², adjusted R², F-statistic, p-value, AIC/BIC, residual diagnostics, and APA-ready results — instantly and free.

Paste your X (predictor) and Y (outcome) values, choose a sample dataset, upload a CSV/Excel file, or use manual entry. Comma-separated input is the default.

💡 Both lists must have the same length. Decimals (e.g., 1.23) are accepted.

Accepted: .csv .txt .xlsx .xls — first column = X, second column = Y.
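The input rules above (comma-separated values, decimals allowed, equal list lengths) can be sketched in a few lines of Python. This is only an illustration of the rules, not the calculator's own parser; the function names `parse_values` and `parse_xy` are hypothetical.

```python
def parse_values(text):
    """Parse a comma-separated string such as "1.23, 4, 5.6" into floats."""
    parts = [p.strip() for p in text.split(",")]
    # Skip empty fields so a trailing comma does not raise an error.
    return [float(p) for p in parts if p]


def parse_xy(x_text, y_text):
    """Parse both lists and enforce the same-length rule."""
    x = parse_values(x_text)
    y = parse_values(y_text)
    if len(x) != len(y):
        raise ValueError(f"X has {len(x)} values but Y has {len(y)}")
    return x, y


x, y = parse_xy("10, 15, 20, 25", "8, 14, 22, 33")
print(x, y)
```

A mismatch such as `parse_xy("1, 2, 3", "4, 5")` raises `ValueError`, which mirrors the requirement that both lists have the same length.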

A sample dataset is auto-loaded on first visit. Switching to another sample dataset replaces the X and Y values in the text areas.

📐 Technical Notes & Formulas

Sub-section A — Formulas Used
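For reference, the statistics reported by the calculator have the following standard textbook definitions for a degree-p polynomial fitted by OLS to n observations. The AIC and BIC lines use the common Gaussian form with additive constants dropped and k = p + 1 estimated coefficients; that convention is an assumption, and other software may report values that differ by a constant.

```latex
\begin{aligned}
y_i &= \beta_0 + \beta_1 x_i + \beta_2 x_i^2 + \dots + \beta_p x_i^p + \varepsilon_i \\
\hat{\boldsymbol{\beta}} &= (X^\top X)^{-1} X^\top \mathbf{y} \\
SS_{\text{res}} &= \sum_{i=1}^{n} (y_i - \hat{y}_i)^2, \qquad
SS_{\text{tot}} = \sum_{i=1}^{n} (y_i - \bar{y})^2 \\
R^2 &= 1 - \frac{SS_{\text{res}}}{SS_{\text{tot}}} \\
R^2_{\text{adj}} &= 1 - (1 - R^2)\,\frac{n - 1}{n - p - 1} \\
F &= \frac{(SS_{\text{tot}} - SS_{\text{res}})/p}{SS_{\text{res}}/(n - p - 1)} \\
\mathrm{AIC} &= n \ln\!\left(\frac{SS_{\text{res}}}{n}\right) + 2k, \qquad
\mathrm{BIC} = n \ln\!\left(\frac{SS_{\text{res}}}{n}\right) + k \ln n, \qquad k = p + 1
\end{aligned}
```

Here X is the n × (p + 1) design matrix with columns 1, x, x², …, xᵖ, and the F-statistic has (p, n − p − 1) degrees of freedom.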

Sub-section B — Technical Notes

- Linear in parameters: Although the curve is non-linear in X, the model is linear in the βᵢ, so OLS applies directly.

- Multicollinearity: X, X², X³ … are highly correlated. Centre X (subtract mean) or use orthogonal polynomials to stabilise the fit.

- Overfitting: Adding terms never increases SSres, so higher degrees (p ≥ 5) always look at least as good on raw fit, yet they rarely improve adjusted R² or BIC. Use BIC and cross-validation (CV) to choose p honestly.

- Extrapolation: Predictions outside the observed X range are unsafe — polynomials swing wildly at the edges.

- Assumption checks: Residuals-vs-fitted (linearity / homoscedasticity), Q-Q plot (normality), Durbin-Watson (autocorrelation).

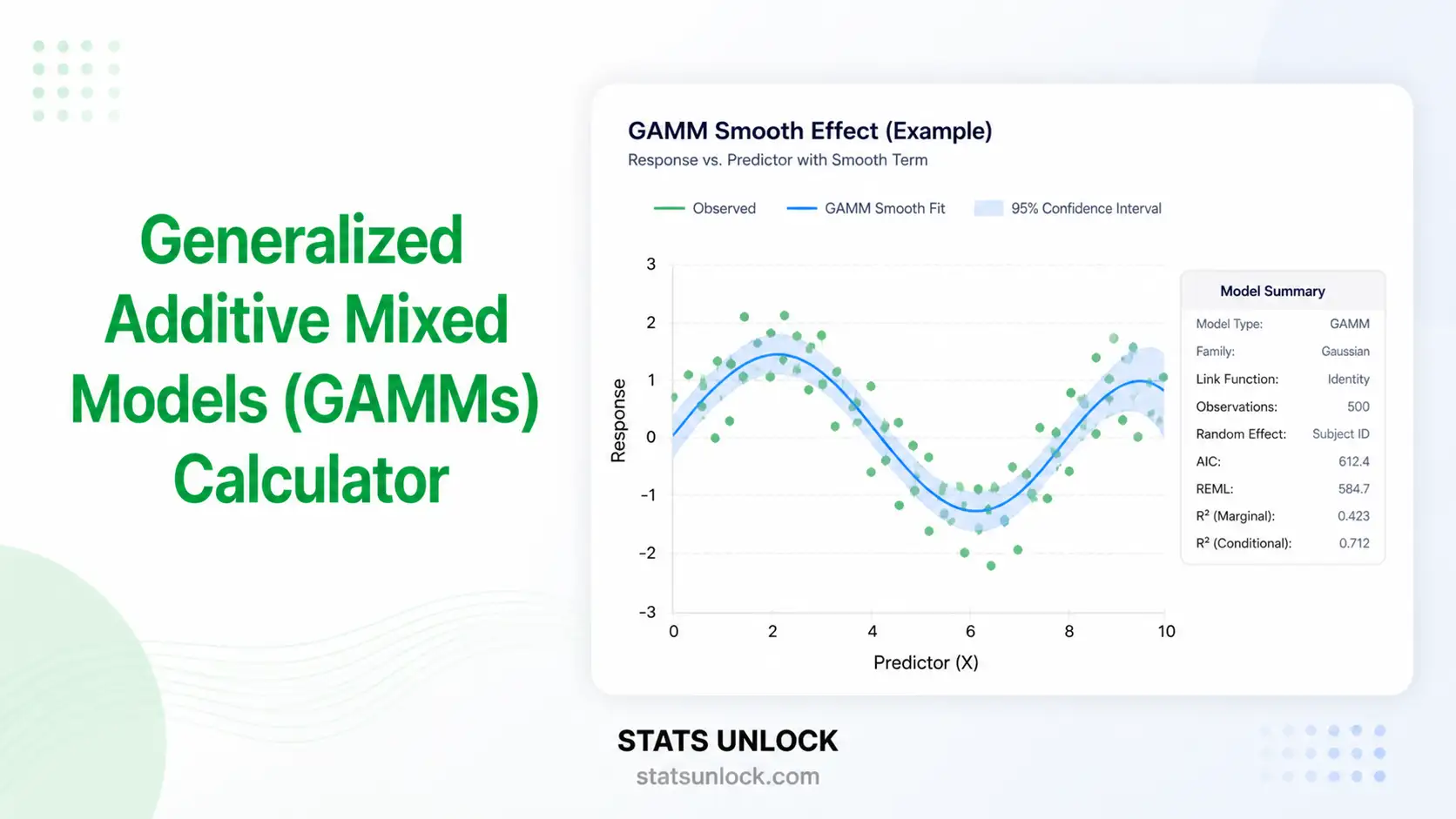

- Alternatives: If the curve is biologically meaningful, prefer a non-linear model (logistic, exponential, Michaelis-Menten). If you only need flexibility, splines or LOESS often outperform high-degree polynomials.
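The multicollinearity note can be illustrated with a small Python sketch that compares the correlation between X and X² before and after centring. The data (the integers 1 to 10) and the helper name `pearson_r` are chosen purely for illustration.

```python
import math


def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    var_a = sum((ai - mean_a) ** 2 for ai in a)
    var_b = sum((bi - mean_b) ** 2 for bi in b)
    return cov / math.sqrt(var_a * var_b)


x = [float(v) for v in range(1, 11)]   # illustrative predictor values
mean_x = sum(x) / len(x)
xc = [v - mean_x for v in x]           # centred predictor

r_raw = pearson_r(x, [v ** 2 for v in x])
r_centred = pearson_r(xc, [v ** 2 for v in xc])
print(f"corr(X, X^2) raw:     {r_raw:.3f}")
print(f"corr(X, X^2) centred: {r_centred:.3f}")
```

Because these X values are symmetric about their mean, centring removes the correlation between the linear and quadratic terms entirely. With asymmetric data the correlation is reduced rather than eliminated, and odd powers such as X and X³ stay correlated even after centring, which is the case orthogonal polynomials address.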

🎯 When to Use Polynomial Regression

This free polynomial regression calculator is designed for researchers, students, and analysts who need to fit a curved (non-linear) trend to two-variable data using ordinary least squares. It answers the question: "Does a polynomial of degree p fit my X-Y data better than a straight line, and how well?"

Decision Checklist

- Your X (predictor) is continuous

- Your Y (outcome) is continuous

- A scatter plot shows a clear curved pattern (U, inverted-U, S-shape, or wavy)

- You have at least 10–20 observations per polynomial term

- You want a flexible smoother for description, not a mechanistic model

- Do NOT use if a straight line already fits well — use simple linear regression

- Do NOT use if you have a known non-linear functional form — use non-linear regression instead

- Do NOT extrapolate beyond the observed range of X
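Whether a degree-p polynomial is worth using over a straight line can be checked numerically by comparing BIC across degrees. The sketch below is a minimal standard-library illustration, not the calculator's implementation: it fits each degree by solving the normal equations on centred X, uses the form BIC = n·ln(SSres/n) + k·ln(n) with k = p + 1 (an assumed convention), and runs on a made-up quadratic dataset with a small alternating disturbance. The names `solve`, `fit_poly`, and `bic` are hypothetical.

```python
import math


def solve(a, b):
    """Solve the linear system a·x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_poly(x, y, degree):
    """Return (coefficients on centred x, SSres) for an OLS polynomial fit."""
    mean_x = sum(x) / len(x)
    t = [xi - mean_x for xi in x]  # centring stabilises the normal equations
    k = degree + 1
    xtx = [[sum(ti ** (i + j) for ti in t) for j in range(k)] for i in range(k)]
    xty = [sum((ti ** i) * yi for ti, yi in zip(t, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(b * ti ** i for i, b in enumerate(beta)) for ti in t]
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    return beta, ss_res


def bic(ss_res, n, k):
    """BIC up to an additive constant; lower is better."""
    return n * math.log(ss_res / n) + k * math.log(n)


# Made-up data: a quadratic trend plus a small alternating disturbance.
x = list(range(20))
y = [2 + 3 * xi - 0.5 * xi ** 2 + (0.3 if xi % 2 == 0 else -0.3) for xi in x]

bic_by_degree = {d: bic(fit_poly(x, y, d)[1], len(x), d + 1) for d in range(1, 5)}
best_degree = min(bic_by_degree, key=bic_by_degree.get)
print(bic_by_degree)
print("Degree with lowest BIC:", best_degree)
```

On this dataset the quadratic has the lowest BIC: the straight line leaves a large SSres, while the cubic and quartic reduce SSres too little to offset the extra-parameter penalty.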

Real-World Examples

- Agriculture / Ecology — Modelling crop yield as a function of fertiliser dose, where yield rises, plateaus, and falls (inverted-U).

- Biology / Physiology — Reaction time across the human lifespan: fast in young adults, slower in children and the elderly (U-shape, quadratic).

- Pharmacology — Drug response curves where therapeutic effect rises with dose, peaks, then drops at toxic levels (cubic).

- Economics / Marketing — Diminishing returns of advertising spend on revenue (concave quadratic).

- Wildlife Ecology — Animal activity over time of day (bimodal or sinusoidal-like patterns approximated by a quartic).

Sample Size Guidance

- Quadratic (p = 2): n ≥ 20

- Cubic (p = 3): n ≥ 30–40

- Quartic (p = 4): n ≥ 50–60

- Higher (p ≥ 5): n ≥ 100, and only if a clear scientific case exists

Decision Tree

Two continuous variables, X and Y
└─ Scatter plot is approximately linear?
   ├─ Yes → Simple Linear Regression
   └─ No (curved)?
      ├─ Curve is biologically/physically known? → Non-linear Regression
      ├─ Smooth wavy curve, no theory? → Polynomial Regression (THIS TOOL)
      └─ Highly local bumps? → Splines or LOESS

📚 How to Use This Polynomial Regression Calculator (10 Steps)

1. Enter Your Data. Type or paste comma-separated X and Y values, upload a CSV/Excel file, or use the Manual Entry table. Example: X = "10, 15, 20, 25, …", Y = "8, 14, 22, 33, …".

2. Choose a Sample Dataset. Five datasets are built in. Start with the Plant Growth vs. Temperature curve (auto-loaded) for an inverted-U shape.

3. Configure the Polynomial. Choose the degree (2–6), α (0.01 / 0.05 / 0.10), centring (recommended), and CI level (90 / 95 / 99 %).

4. Run the Analysis. Click "▶ Run Polynomial Regression". Results appear immediately.

5. Read the Summary Cards. Green = significant; amber = borderline; red = not significant. R², adjusted R², F, and p are shown at a glance.

6. Read the Full Output. The coefficients table reports each βᵢ with its SE, t, and p. The ANOVA panel shows the global F-test.

7. Examine Both Plots. The fitted-curve plot overlays the model on the data; the residuals-vs-fitted plot shows whether the assumptions hold.

8. Check Assumptions. Linearity, normality, homoscedasticity, and autocorrelation of the residuals are auto-flagged with pass / warn / fail badges.

9. Read the Interpretation. The dynamic interpretation paragraphs translate every number into plain English; the five writing-style cards generate APA, thesis, plain-language, abstract, and pre-registration text.

10. Export Your Results. Use Download Doc (.txt) for fast pasting into a manuscript, or Download PDF for a print-ready report.
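As an illustration of the autocorrelation check in the Check Assumptions step, the Durbin-Watson statistic can be computed directly from the residuals. The sketch below uses made-up residual sequences; the thresholds the calculator uses for its pass / warn / fail badges are not stated here, so none are assumed.

```python
def durbin_watson(residuals):
    """Sum of squared successive differences divided by the sum of squared residuals."""
    num = sum(
        (residuals[i] - residuals[i - 1]) ** 2 for i in range(1, len(residuals))
    )
    den = sum(e ** 2 for e in residuals)
    return num / den


# Made-up residuals, in observation order.
trending = [1.0, 1.0, -1.0, -1.0]      # neighbours tend to share a sign
alternating = [1.0, -1.0, 1.0, -1.0]   # neighbours tend to flip sign

print(durbin_watson(trending))      # 1.0
print(durbin_watson(alternating))   # 3.0
```

The statistic ranges from 0 to 4. Values near 2 suggest no first-order autocorrelation, values well below 2 suggest positive autocorrelation, and values well above 2 suggest negative autocorrelation.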

❓ Frequently Asked Questions

Q1. What is polynomial regression?

Q2. When should I use polynomial regression instead of linear regression?

Q3. What polynomial degree should I choose?

Q4. How do I interpret R-squared in polynomial regression?

Q5. What does the polynomial regression equation look like?

Q6. How is polynomial regression different from non-linear regression?

Q7. What sample size do I need?

Q8. How do I report polynomial regression in APA format?

Q9. Can polynomial regression be used for prediction?

Q10. What are the assumptions of polynomial regression?
