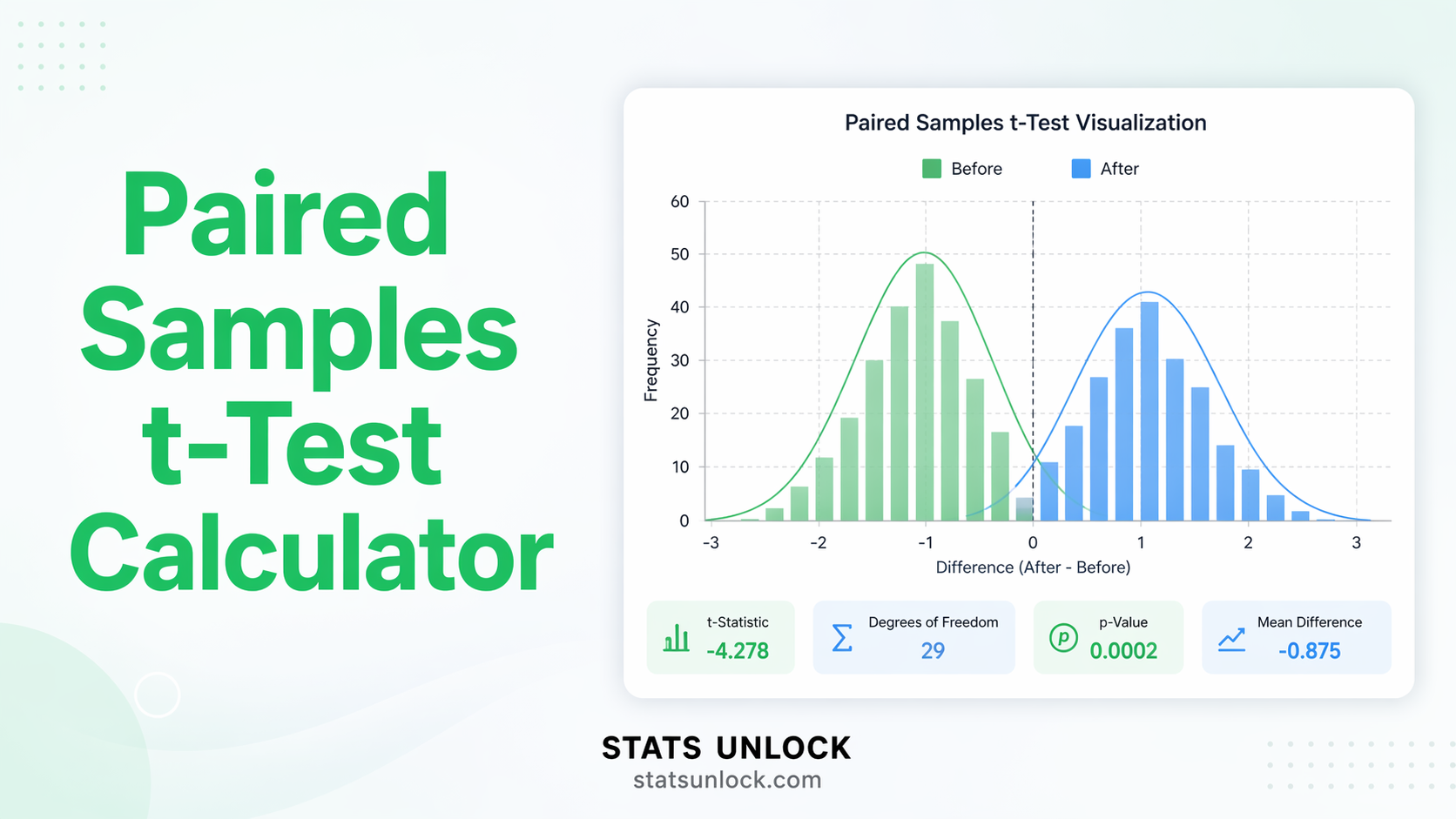

Paired Samples t-Test Calculator

Compare before-and-after or matched-pair measurements from the same subjects. Get t-statistic, p-value, Cohen's d, confidence interval, difference scores table, and five APA-ready reporting templates — free, no software needed.

| Time 1 (Before) | Time 2 (After) |
|---|---|

Paired Samples t-Test Formulas

Technical Notes

- Equivalence to one-sample t-test: The paired t-test is mathematically identical to running a one-sample t-test on the difference scores (dᵢ), testing H₀: μ_d = 0.

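- Normality requirement: The assumption is that the difference scores are normally distributed, not the raw Time 1 or Time 2 scores individually. For n ≥ 30 pairs, the central limit theorem (CLT) makes the sampling distribution of the mean difference approximately normal, so the test is reasonably robust to moderate non-normality in the differences.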

- Cohen's d for paired designs: d = d̄ / s_d. This differs from the independent-samples Cohen's d (which uses pooled SD). The paired d can be larger than the independent d for the same raw data, because pairing removes between-subject variance from the denominator.

- Correlation bonus: The paired design is more powerful than independent samples when there is a positive correlation between the two measurements. The variance of the differences equals Var(X₂) + Var(X₁) − 2·Cov(X₁,X₂). Higher correlation → lower s_d → smaller SE → larger t.

- r effect size: r = t / √(t² + df). A supplementary measure useful when comparing across studies. Benchmarks: small ≈ 0.1, medium ≈ 0.3, large ≈ 0.5.

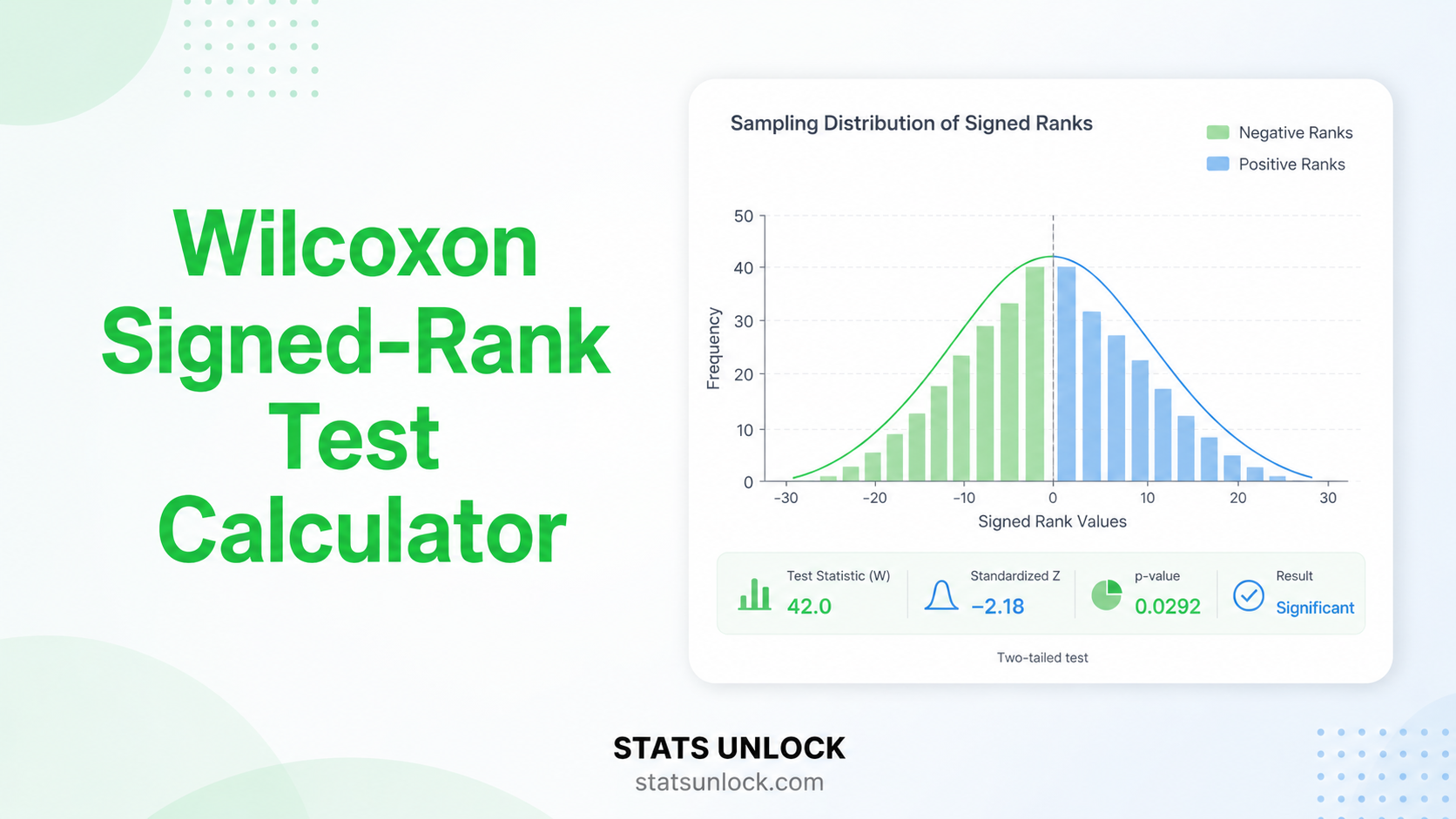

- Wilcoxon alternative: If difference scores are clearly non-normal (especially with n < 30), use the Wilcoxon Signed-Rank Test. It does not assume normality and is the standard non-parametric paired alternative.
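The quantities in the notes above can be computed directly from the difference scores. The following is a minimal sketch, not the calculator's own implementation; the data values and the function name `paired_t_summary` are made up for illustration, and SciPy is assumed only for the t distribution.

```python
import math
from scipy import stats

# Hypothetical before/after scores for 8 subjects (illustrative values only).
before = [72, 65, 80, 58, 77, 69, 74, 61]
after = [75, 70, 79, 66, 82, 71, 80, 64]

def paired_t_summary(x1, x2, confidence=0.95):
    """Paired t-test quantities computed from difference scores d = x2 - x1."""
    diffs = [b - a for a, b in zip(x1, x2)]
    n = len(diffs)
    df = n - 1
    mean_d = sum(diffs) / n
    # Sample standard deviation of the differences (n - 1 in the denominator).
    sd_d = math.sqrt(sum((d - mean_d) ** 2 for d in diffs) / df)
    se = sd_d / math.sqrt(n)
    t = mean_d / se
    p_two_tailed = 2 * stats.t.sf(abs(t), df)
    t_crit = stats.t.ppf(1 - (1 - confidence) / 2, df)
    return {
        "n": n,
        "df": df,
        "mean_diff": mean_d,
        "sd_diff": sd_d,
        "t": t,
        "p_two_tailed": p_two_tailed,
        "cohens_d": mean_d / sd_d,           # d = mean difference / SD of differences
        "r": t / math.sqrt(t ** 2 + df),     # r = t / sqrt(t^2 + df)
        "ci": (mean_d - t_crit * se, mean_d + t_crit * se),
    }

summary = paired_t_summary(before, after)
print(summary)
```

The t and p values from this sketch should agree with `scipy.stats.ttest_rel(after, before)` up to floating-point error.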

Determine how many pairs you need before collecting data, or check the power of your completed study.

Cohen's d Reference (Paired t-Test)

| Label | d | Meaning | Required pairs (two-tailed α = .05, 80% power) |
|---|---|---|---|
| Small | 0.2 | Subtle change; requires a large sample to detect reliably | 199 |
| Medium | 0.5 | Moderate change; detectable with a reasonable n | 34 |
| Large | 0.8 | Obvious change; detectable even with small samples | 15 |
| Very Large | 1.2 | Dramatic change; clearly visible without statistics | 8 |
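Required-pair counts like those in the table can be approximated with an exact power calculation based on the noncentral t distribution. This is a sketch under stated assumptions (two-tailed test, effect size defined as d = d̄ / s_d); the function names `paired_t_power` and `required_pairs` are hypothetical, and results can differ by a pair or two from tables built with a normal approximation.

```python
import math
from scipy import stats

def paired_t_power(d, n, alpha=0.05):
    """Two-tailed power of a paired t-test with n pairs and true effect size d."""
    df = n - 1
    ncp = abs(d) * math.sqrt(n)              # noncentrality parameter
    t_crit = stats.t.ppf(1 - alpha / 2, df)
    # Probability that |T| exceeds the critical value under the noncentral t.
    upper = stats.nct.sf(t_crit, df, ncp)
    lower = stats.nct.cdf(-t_crit, df, ncp)
    return upper + lower

def required_pairs(d, alpha=0.05, power=0.80, max_n=100_000):
    """Smallest number of pairs whose power reaches the target."""
    for n in range(2, max_n + 1):
        if paired_t_power(d, n, alpha) >= power:
            return n
    raise ValueError("target power not reached within max_n pairs")

for effect in (0.2, 0.5, 0.8, 1.2):
    print(effect, required_pairs(effect))
```

The same `paired_t_power` function can be used the other way round, to check the power a completed study had for a given assumed effect size.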

The paired samples t-test asks: "Did the measurements of the same participants (or matched pairs) change significantly between two conditions?" It controls for individual differences by focusing on within-subject change.

Decision Checklist

- ✅ The same participants are measured twice (before/after, two conditions)

- ✅ OR two separate but specifically matched individuals form pairs (e.g., twins, matched controls)

- ✅ Your dependent variable is continuous (interval or ratio scale)

- ✅ The difference scores (d = X₂ − X₁) are approximately normally distributed, or n ≥ 30 pairs

- ✅ Pairs are independent of each other (one subject's change does not influence another's)

- ❌ Do NOT use if groups are independent (different people) → use Independent Samples t-Test

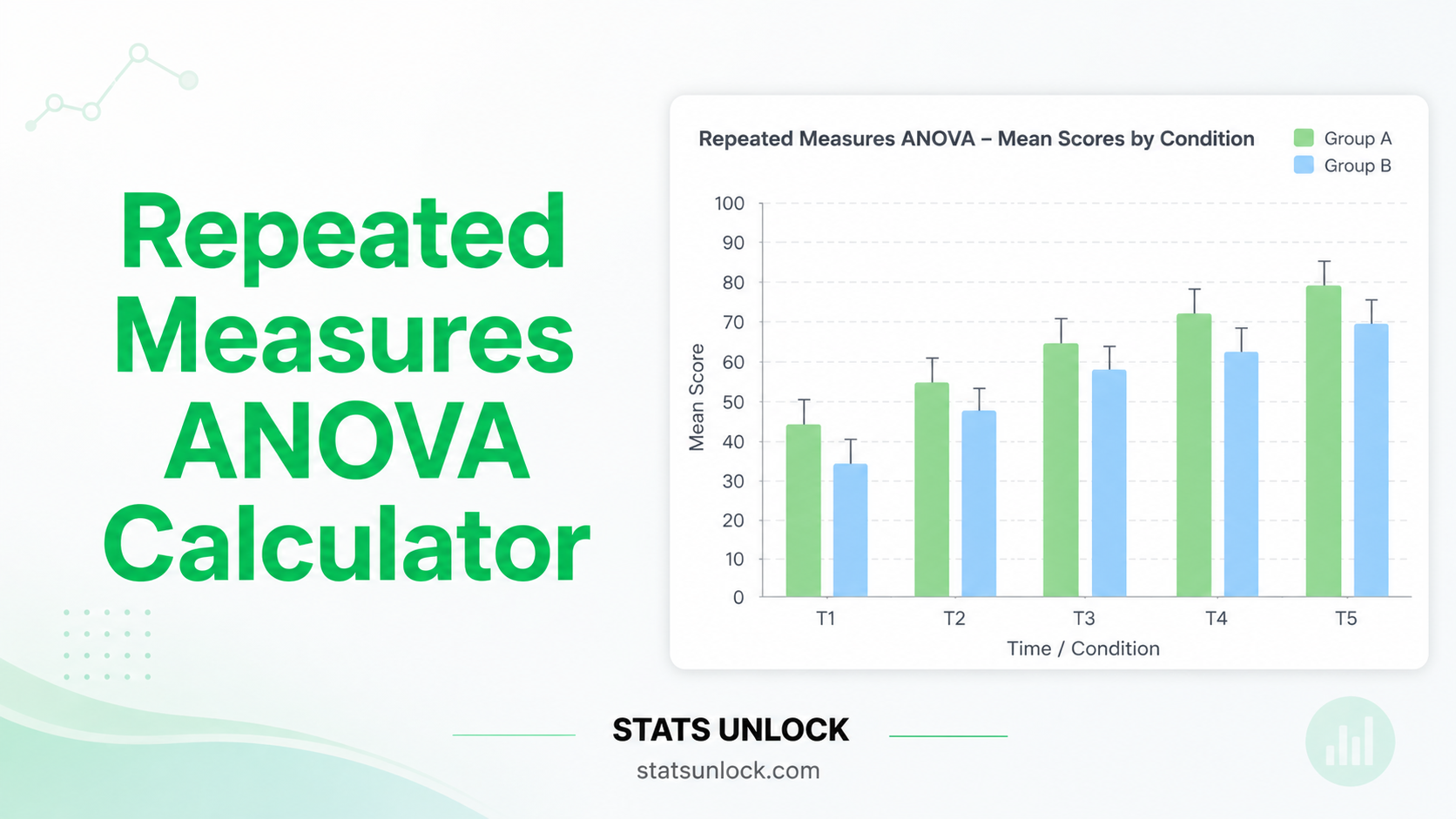

- ❌ Do NOT use if you have 3+ time points or conditions → use Repeated-Measures ANOVA

- ❌ Do NOT use if difference scores are clearly non-normal with small n → use Wilcoxon Signed-Rank Test

Real-World Examples

Comparing systolic blood pressure before and after a 4-week antihypertensive drug treatment in the same patients, to determine if the drug significantly reduces blood pressure.

Comparing student exam scores before and after a tutoring intervention, with the same students measured at both time points, to evaluate the tutoring program's effectiveness.

Measuring athletes' sprint times before and after a 6-week strength training program to determine whether the program significantly improves performance.

Comparing GAD-7 anxiety scores before and after a 10-week cognitive behavioural therapy program, testing whether therapy produces a statistically significant reduction in anxiety symptoms.

Related Tests — Decision Tree

What is the paired samples t-test and when should I use it?

The paired samples t-test compares the means of two related measurements from the same participants (or matched pairs). It tests whether the average within-subject change (mean difference) is significantly different from zero. Use it for before-and-after designs, repeated measures with two time points, or matched-pair studies where each observation in Condition 1 has a specific partner in Condition 2.

What is the difference between paired and independent samples t-tests?

The key distinction is the relationship between observations. In the paired test, each data point in Time 1 has a specific, meaningful partner in Time 2 (same person, or matched individual). In the independent test, the two groups have no such pairing. The paired test is more powerful when pairing effectively reduces variability, because it controls for individual differences by analysing change within each pair.

What assumptions does the paired samples t-test require?

The test rests on four assumptions:

1. Normality of differences: the differences (d = X₂ − X₁) must be approximately normally distributed, not the raw scores.

2. Independence of pairs: each pair's difference must not influence another pair's difference.

3. Continuous DV: the variable must be on an interval or ratio scale.

4. Exact one-to-one pairing: each subject provides exactly one observation at each time point.

For n ≥ 30 pairs, the CLT makes the test robust to non-normal differences.
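One common way to screen the normality assumption is a Shapiro-Wilk test on the difference scores. The sketch below assumes SciPy; the data and the helper name `check_difference_normality` are illustrative only. A non-significant Shapiro-Wilk result does not prove normality, especially with few pairs, so a histogram or Q-Q plot of the differences is a useful complement.

```python
from scipy import stats

# Hypothetical before/after scores (illustrative values only).
before = [72, 65, 80, 58, 77, 69, 74, 61]
after = [75, 70, 79, 66, 82, 71, 80, 64]

def check_difference_normality(x1, x2, alpha=0.05):
    """Shapiro-Wilk test applied to the differences, not to the raw scores."""
    if len(x1) != len(x2):
        raise ValueError("paired data need exactly one observation per subject per time point")
    diffs = [b - a for a, b in zip(x1, x2)]
    w_stat, p_value = stats.shapiro(diffs)
    return {
        "n_pairs": len(diffs),
        "W": float(w_stat),
        "p": float(p_value),
        # True when the test rejects normality at the chosen alpha.
        "flag_non_normal": bool(p_value < alpha),
    }

normality = check_difference_normality(before, after)
print(normality)
```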

What does Cohen's d mean for the paired t-test?

Cohen's d = d̄ / s_d. It measures how many standard deviations of the difference scores the mean change represents. Benchmarks: small = 0.2, medium = 0.5, large = 0.8. Note: the paired Cohen's d uses the SD of the difference scores (s_d), not the pooled SD of the raw scores — so it can be larger than the equivalent independent-samples d for the same data.
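To see how the two versions of d can diverge, the sketch below computes both on the same made-up data. The function names are hypothetical, and the pooled version assumes equal group sizes (so the pooled variance is the simple average of the two variances).

```python
import math
import statistics

# Hypothetical before/after scores (illustrative values only).
before = [72, 65, 80, 58, 77, 69, 74, 61]
after = [75, 70, 79, 66, 82, 71, 80, 64]

def cohens_d_paired(x1, x2):
    """d = mean of the differences / SD of the differences."""
    diffs = [b - a for a, b in zip(x1, x2)]
    return statistics.mean(diffs) / statistics.stdev(diffs)

def cohens_d_pooled(x1, x2):
    """Independent-samples style d using the pooled SD of the raw scores (equal n)."""
    pooled_sd = math.sqrt((statistics.variance(x1) + statistics.variance(x2)) / 2)
    return (statistics.mean(x2) - statistics.mean(x1)) / pooled_sd

d_paired = cohens_d_paired(before, after)
d_pooled = cohens_d_pooled(before, after)
print(f"paired d = {d_paired:.2f}, pooled-SD d = {d_pooled:.2f}")
```

Because the two time points in this example are strongly correlated, s_d is much smaller than the pooled SD, and the paired d comes out larger. When reporting, it helps to state which version was used.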

How is the paired t-test related to the one-sample t-test?

They are mathematically identical when applied correctly. The paired t-test computes difference scores (d = X₂ − X₁) and then runs a one-sample t-test on those differences against a null value of zero (H₀: μ_d = 0). The distinction is conceptual: the paired test explicitly acknowledges the repeated-measures structure of the data.
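The equivalence can be checked numerically. This sketch assumes SciPy's `ttest_rel` and `ttest_1samp`; the data are illustrative.

```python
from scipy import stats

# Hypothetical before/after scores (illustrative values only).
before = [72, 65, 80, 58, 77, 69, 74, 61]
after = [75, 70, 79, 66, 82, 71, 80, 64]

# Paired test on the two columns.
paired = stats.ttest_rel(after, before)

# One-sample test on the difference scores against a null value of zero.
diffs = [a - b for a, b in zip(after, before)]
one_sample = stats.ttest_1samp(diffs, popmean=0)

print(paired.statistic, paired.pvalue)
print(one_sample.statistic, one_sample.pvalue)
```

Both calls should print the same t-statistic and p-value, apart from floating-point rounding.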

Why is the paired t-test more powerful than the independent t-test?

Power comes from a small standard error. In the independent test, SE includes between-subject variability (people differ from each other). In the paired test, between-subject variability is removed — you only measure within-subject change. The variance of the differences is Var(X₂) + Var(X₁) − 2·Cov(X₁,X₂). When the two measurements are positively correlated (which is typical in repeated measures), this covariance term reduces s_d, shrinks SE, and increases power.
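The variance identity and its effect on the standard error can be verified with a few lines of NumPy. This is an illustrative sketch on made-up data; `se_ignoring_pairing` is the standard error one would get by treating the two columns as independent groups of equal size.

```python
import numpy as np

# Hypothetical before/after scores (illustrative values only).
x1 = np.array([72, 65, 80, 58, 77, 69, 74, 61], dtype=float)
x2 = np.array([75, 70, 79, 66, 82, 71, 80, 64], dtype=float)
n = len(x1)

var_diff = np.var(x2 - x1, ddof=1)
cov = np.cov(x1, x2, ddof=1)[0, 1]

# Var(X2 - X1) = Var(X2) + Var(X1) - 2 * Cov(X1, X2)
var_from_identity = np.var(x2, ddof=1) + np.var(x1, ddof=1) - 2 * cov

se_paired = np.sqrt(var_diff / n)
se_ignoring_pairing = np.sqrt((np.var(x1, ddof=1) + np.var(x2, ddof=1)) / n)

print(f"correlation = {np.corrcoef(x1, x2)[0, 1]:.3f}")
print(f"SE (paired) = {se_paired:.3f}, SE (ignoring pairing) = {se_ignoring_pairing:.3f}")
```

With a positive covariance, the paired standard error is the smaller of the two, which is where the extra power comes from. If the covariance were negative, pairing would increase the standard error instead.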

One-tailed or two-tailed — which should I choose?

Choose two-tailed unless you had a strong, pre-specified directional hypothesis before data collection. Two-tailed tests whether the change is non-zero in either direction. One-tailed tests a specific direction (e.g., scores improve). Switching to one-tailed after seeing the data to achieve significance is p-hacking and inflates Type I error. When in doubt, report two-tailed.
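In SciPy, the direction of the test is chosen with the `alternative` argument of `ttest_rel` (available in SciPy 1.6 and later). The sketch below uses made-up data and assumes the pre-specified hypothesis was that Time 2 scores are higher than Time 1 scores.

```python
from scipy import stats

# Hypothetical before/after scores (illustrative values only).
before = [72, 65, 80, 58, 77, 69, 74, 61]
after = [75, 70, 79, 66, 82, 71, 80, 64]

# Two-tailed: is the mean change different from zero in either direction?
two_tailed = stats.ttest_rel(after, before, alternative="two-sided")

# One-tailed: is the mean of (after - before) greater than zero?
one_tailed = stats.ttest_rel(after, before, alternative="greater")

print(f"two-tailed p = {two_tailed.pvalue:.4f}")
print(f"one-tailed p = {one_tailed.pvalue:.4f}")
```

When the observed change is in the hypothesised direction, the one-tailed p-value is half the two-tailed one, which is why choosing the direction after seeing the data inflates the Type I error rate.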

How do I report paired t-test results in APA 7th edition format?

Format: "A paired-samples t-test indicated that [Time 2 label] (M = ___, SD = ___) was [significantly/not significantly] different from [Time 1 label] (M = ___, SD = ___), t(df) = ___, p [</=] ___, d = ___. A 95% CI for the mean difference ranged from ___ to ___." Rules: italicise t, p, M, SD, d; report p to 3 decimal places; write p < .001 not p = .000; always report effect size and CI.
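The p-value rules above are easy to get wrong by hand, so a small formatting helper can be useful. The sketch below is one possible approach, with hypothetical function names; it drops the leading zero from p and writes the confidence interval in square brackets, and it leaves italics to the word processor.

```python
def format_p(p):
    """Format a p-value APA style: three decimals, no leading zero, floor at .001."""
    if p < 0.001:
        return "p < .001"
    return "p = " + f"{p:.3f}".lstrip("0")

def apa_paired_t(t, df, p, d, ci_low, ci_high):
    """Build the statistical part of an APA-style paired t-test sentence."""
    return (
        f"t({df}) = {t:.2f}, {format_p(p)}, d = {d:.2f}, "
        f"95% CI [{ci_low:.2f}, {ci_high:.2f}]"
    )

# Illustrative values only.
print(apa_paired_t(t=3.99, df=7, p=0.0052, d=1.41, ci_low=1.58, ci_high=6.17))
```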

What if my result is non-significant?

A non-significant result (p ≥ α) means the data do not provide sufficient evidence to conclude a real change occurred. Check: (1) statistical power — was the study adequately sized? (2) effect size — is Cohen's d small? (3) variability — are difference scores highly variable? Report the observed d and CI regardless. A non-significant result does not prove no change occurred; it only fails to detect one with the current data.

When should I use the Wilcoxon Signed-Rank test instead?

Use the Wilcoxon Signed-Rank test when: (1) your difference scores are clearly non-normally distributed, especially with n < 30 pairs; (2) there are extreme outliers among the differences that would distort the mean; (3) the data are on an ordinal scale rather than interval/ratio. The Wilcoxon test is less powerful than the paired t-test when normality holds, but is more reliable when it does not.
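As a rough illustration, the sketch below runs both tests on a set of made-up difference scores that contains one extreme outlier. It assumes SciPy's `wilcoxon`, which accepts the difference scores directly, and `ttest_1samp` for the equivalent t-test on the same differences.

```python
from scipy import stats

# Hypothetical difference scores (after - before) with one extreme outlier (15.0).
differences = [1.2, 2.5, -0.8, 3.1, 0.4, 15.0, 2.0, 1.7, -0.3, 2.9]

# Rank-based test: the outlier only contributes its rank, not its magnitude.
wilcoxon_result = stats.wilcoxon(differences)

# Paired t-test expressed as a one-sample test on the same differences.
t_result = stats.ttest_1samp(differences, popmean=0)

print(f"Wilcoxon: W = {wilcoxon_result.statistic}, p = {wilcoxon_result.pvalue:.4f}")
print(f"t-test:   t = {t_result.statistic:.3f}, p = {t_result.pvalue:.4f}")
```

In this example the outlier inflates s_d for the t-test, while the Wilcoxon test is largely unaffected because it works on ranks. Note that the Wilcoxon test still assumes the differences are roughly symmetric around their median.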

The following references support the statistical methods used in this paired samples t-test calculator, covering effect size interpretation, p-value reporting, and best practices in repeated-measures hypothesis testing.

- Student [Gosset, W. S.]. (1908). The probable error of a mean. Biometrika, 6(1), 1–25. https://doi.org/10.1093/biomet/6.1.1

- Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates.

- American Psychological Association. (2020). Publication manual of the American Psychological Association (7th ed.). https://doi.org/10.1037/0000165-000

- Field, A. (2018). Discovering statistics using IBM SPSS statistics (5th ed.). SAGE Publications.

- Gravetter, F. J., & Wallnau, L. B. (2021). Statistics for the behavioral sciences (10th ed.). Cengage Learning.

- Cumming, G. (2014). The new statistics: Why and how. Psychological Science, 25(1), 7–29. https://doi.org/10.1177/0956797613504966

- Lakens, D. (2013). Calculating and reporting effect sizes to facilitate cumulative science. Frontiers in Psychology, 4, 863. https://doi.org/10.3389/fpsyg.2013.00863

- Sullivan, G. M., & Feinn, R. (2012). Using effect size — or why the P value is not enough. Journal of Graduate Medical Education, 4(3), 279–282. https://doi.org/10.4300/JGME-D-12-00156.1

- Wasserstein, R. L., & Lazar, N. A. (2016). The ASA statement on p-values. The American Statistician, 70(2), 129–133. https://doi.org/10.1080/00031305.2016.1154108

- Wilcoxon, F. (1945). Individual comparisons by ranking methods. Biometrics Bulletin, 1(6), 80–83. https://doi.org/10.2307/3001968

- Maxwell, S. E., & Delaney, H. D. (2004). Designing experiments and analyzing data (2nd ed.). Lawrence Erlbaum Associates.

- R Core Team. (2024). R: A language and environment for statistical computing. https://www.R-project.org/

- Virtanen, P., et al. (2020). SciPy 1.0. Nature Methods, 17, 261–272. https://doi.org/10.1038/s41592-019-0686-2

- NIST/SEMATECH. (2013). e-Handbook of statistical methods. https://www.itl.nist.gov/div898/handbook/