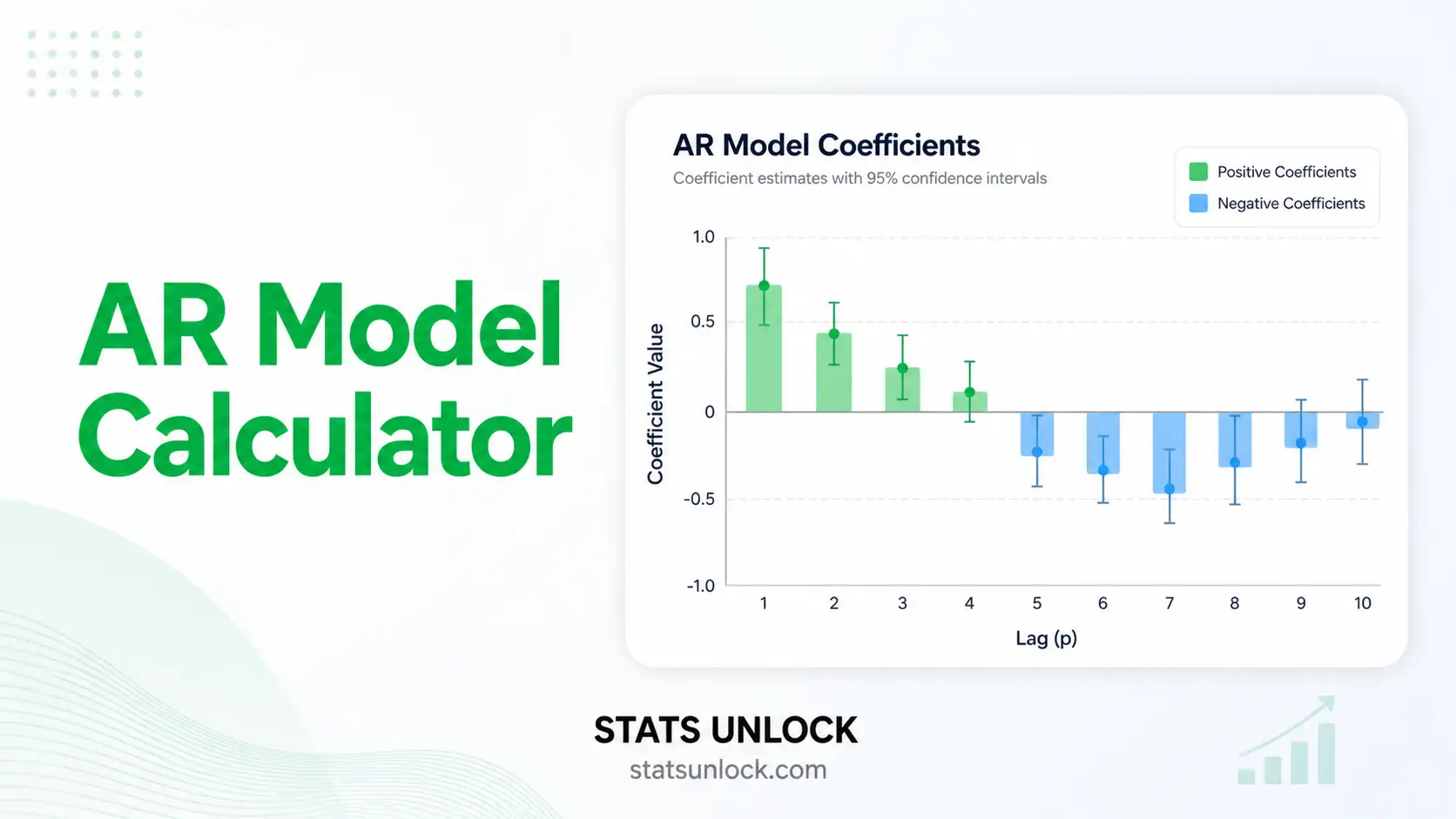

AR Model Calculator — Autoregressive Time Series Analysis

Fit an autoregressive model AR(p), forecast future values, examine ACF/PACF, run Ljung-Box residual diagnostics, and produce publication-ready output — all in your browser, free.

📥 Step 1 — Enter Your Time Series Data

Paste comma-separated values into each cluster's textarea (e.g. 52, 48, 55, 61, 47, ...). Each cluster is fitted as its own AR model. Cluster names are editable.

⚙️ Step 2 — Configure Your AR Model

📐 Technical Notes & Formulas

A. AR(p) Model Equation
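In the notation used elsewhere on this page (x_t for the series, φ_k for the AR coefficients), the standard textbook form of the AR(p) equation is shown below. The intercept c, process mean μ, and white-noise variance σ² are the usual conventions, assumed here rather than taken from the tool.

```latex
x_t = c + \phi_1 x_{t-1} + \phi_2 x_{t-2} + \cdots + \phi_p x_{t-p} + \varepsilon_t,
\qquad \varepsilon_t \sim \mathrm{WN}(0, \sigma^2),
\qquad c = \mu \left(1 - \phi_1 - \cdots - \phi_p\right)
```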

B. OLS Estimation (Conditional)
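Conditional OLS treats the first p observations as fixed and regresses x_t on an intercept and its p lags, so the coefficient vector (ĉ, φ̂₁, …, φ̂_p) equals (XᵀX)⁻¹Xᵀy. The pure-Python sketch below shows that computation, with standard errors taken from σ̂²(XᵀX)⁻¹ and the R² discussed in the FAQ. The names fit_ar_ols and invert are hypothetical, and the tool's own degrees-of-freedom conventions may differ.

```python
import math
import random


def invert(a):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    aug = [[float(v) for v in row] + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pv = aug[col][col]
        aug[col] = [v / pv for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def fit_ar_ols(x, p):
    """Conditional OLS fit of x_t = c + phi_1 x_{t-1} + ... + phi_p x_{t-p} + e_t.

    Returns (c, phis, ses, sigma2, r2); ses holds standard errors for
    [c, phi_1, ..., phi_p]. The first p values serve only as lags.
    """
    rows = [[1.0] + [x[t - k] for k in range(1, p + 1)] for t in range(p, len(x))]
    y = [float(x[t]) for t in range(p, len(x))]
    m = p + 1
    # Normal equations (X'X) beta = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(m)] for i in range(m)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(m)]
    xtx_inv = invert(xtx)
    beta = [sum(xtx_inv[i][j] * xty[j] for j in range(m)) for i in range(m)]
    resid = [yi - sum(b * v for b, v in zip(beta, r)) for r, yi in zip(rows, y)]
    rss = sum(e * e for e in resid)
    sigma2 = rss / (len(y) - m)  # residual variance with n_eff - (p + 1) df
    ses = [math.sqrt(sigma2 * xtx_inv[i][i]) for i in range(m)]
    ybar = sum(y) / len(y)
    r2 = 1.0 - rss / sum((yi - ybar) ** 2 for yi in y)
    return beta[0], beta[1:], ses, sigma2, r2


if __name__ == "__main__":
    # Simulated AR(1) with c = 1, phi_1 = 0.6 (illustrative values only)
    rng = random.Random(42)
    x = [2.5]
    for _ in range(299):
        x.append(1.0 + 0.6 * x[-1] + rng.gauss(0.0, 1.0))
    c, phis, ses, sigma2, r2 = fit_ar_ols(x, 1)
    print(f"c = {c:.3f}, phi_1 = {phis[0]:.3f} (SE = {ses[1]:.3f}), R^2 = {r2:.3f}")
```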

C. Yule-Walker Estimation
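Yule-Walker estimation replaces the regression with sample autocovariances: the φ_k solve the Toeplitz system Γ̂φ = γ̂, where Γ̂ has entries γ̂(|i − j|), and σ̂² = γ̂(0) − Σ φ̂_k γ̂(k). A minimal sketch, assuming the usual biased autocovariance estimator (divisor n) and a mean-centred series, so the intercept would be recovered afterwards as x̄(1 − Σ φ̂_k). The names autocov, solve, and fit_ar_yule_walker are hypothetical.

```python
import random


def autocov(x, k):
    """Biased sample autocovariance at lag k (divisor n)."""
    n = len(x)
    xbar = sum(x) / n
    return sum((x[t] - xbar) * (x[t + k] - xbar) for t in range(n - k)) / n


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [[float(v) for v in row] + [float(bi)] for row, bi in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    sol = [0.0] * n
    for i in range(n - 1, -1, -1):
        sol[i] = (m[i][n] - sum(m[i][j] * sol[j] for j in range(i + 1, n))) / m[i][i]
    return sol


def fit_ar_yule_walker(x, p):
    """Yule-Walker estimates (phi_1..phi_p) and innovation variance sigma^2."""
    g = [autocov(x, k) for k in range(p + 1)]
    gamma = [[g[abs(i - j)] for j in range(p)] for i in range(p)]  # Toeplitz matrix
    phis = solve(gamma, g[1:])
    sigma2 = g[0] - sum(phi * gk for phi, gk in zip(phis, g[1:]))
    return phis, sigma2


if __name__ == "__main__":
    rng = random.Random(1)
    x = [0.0, 0.0]
    for _ in range(498):
        x.append(0.5 * x[-1] + 0.3 * x[-2] + rng.gauss(0.0, 1.0))  # simulated AR(2)
    phis, sigma2 = fit_ar_yule_walker(x, 2)
    print([round(v, 3) for v in phis], round(sigma2, 3))
```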

D. Information Criteria
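Information criteria trade fit against complexity. Under Gaussian errors, the conditional log-likelihood can be written from the residual sum of squares (RSS) over the n_eff observations used in the fit, giving AIC = −2 ln L + 2k and BIC = −2 ln L + k ln n_eff. The sketch below assumes k = p + 2 (the φ_k, the intercept, and σ²). Packages differ in such constants, so absolute AIC values are comparable only within one tool, and candidate orders should be fitted on the same n_eff observations. The names aic_bic and choose_order_by_aic are hypothetical.

```python
import math


def aic_bic(rss, n_eff, k):
    """AIC and BIC from the Gaussian log-likelihood evaluated at sigma^2 = rss / n_eff."""
    log_lik = -0.5 * n_eff * (math.log(2.0 * math.pi) + math.log(rss / n_eff) + 1.0)
    aic = -2.0 * log_lik + 2.0 * k
    bic = -2.0 * log_lik + k * math.log(n_eff)
    return aic, bic


def choose_order_by_aic(fits):
    """Pick p with the smallest AIC; fits maps p -> (rss, n_eff), assuming k = p + 2."""
    scores = {p: aic_bic(rss, n_eff, p + 2)[0] for p, (rss, n_eff) in fits.items()}
    return min(scores, key=scores.get), scores


if __name__ == "__main__":
    # Hypothetical RSS values for AR(1)-AR(3), all fitted on the same 100 observations
    best, scores = choose_order_by_aic({1: (60.0, 100), 2: (50.0, 100), 3: (49.9, 100)})
    print(best, {p: round(s, 2) for p, s in scores.items()})
```

In this made-up example, moving from AR(2) to AR(3) barely lowers the RSS, so the extra parameter's penalty outweighs the gain and AR(2) is selected.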

E. Ljung-Box Q Statistic
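The Ljung-Box statistic pools the first h residual autocorrelations r_k as Q = n(n + 2) Σ_{k=1}^{h} r_k² / (n − k) and compares Q with a χ² distribution. A common convention uses h − p degrees of freedom for the residuals of a fitted AR(p); R's Box.test exposes this through its fitdf argument, which defaults to 0. The pure-Python sketch below computes Q and an upper-tail χ² probability from the series expansion of the regularized incomplete gamma function. The names ljung_box, chi2_sf, and residual_acf are hypothetical, not the tool's code.

```python
import math
import random


def residual_acf(e, max_lag):
    """Sample autocorrelations r_1..r_max_lag of a residual series."""
    n = len(e)
    ebar = sum(e) / n
    d = [v - ebar for v in e]
    g0 = sum(v * v for v in d)
    return [sum(d[t] * d[t + k] for t in range(n - k)) / g0 for k in range(1, max_lag + 1)]


def chi2_sf(q, df):
    """P(chi2_df > q) via the series for the lower regularized gamma P(df/2, q/2).

    Terms are accumulated in log space so large q does not overflow; df must be > 0.
    """
    if q <= 0:
        return 1.0
    a, x = df / 2.0, q / 2.0
    log_term = -math.log(a)  # n = 0 term of sum_n x^n / (a (a+1) ... (a+n))
    log_total = log_term
    n = 0
    while True:
        n += 1
        log_term += math.log(x) - math.log(a + n)
        log_total += math.log1p(math.exp(log_term - log_total))
        if n > x and log_term - log_total < -40.0:  # terms now shrinking and negligible
            break
    lower = math.exp(log_total - x + a * math.log(x) - math.lgamma(a))
    return max(0.0, 1.0 - lower)


def ljung_box(resid, h, fitdf=0):
    """Return (Q, df, p-value) for lags 1..h; df = h - fitdf (fitdf = p for AR(p) residuals)."""
    n = len(resid)
    r = residual_acf(resid, h)
    q = n * (n + 2) * sum(rk * rk / (n - k) for k, rk in enumerate(r, start=1))
    df = h - fitdf
    return q, df, chi2_sf(q, df)


if __name__ == "__main__":
    rng = random.Random(5)
    resid = [rng.gauss(0.0, 1.0) for _ in range(200)]  # stand-in for AR(1) residuals
    q, df, p = ljung_box(resid, h=10, fitdf=1)
    print(f"Q(10) = {q:.2f}, df = {df}, p = {p:.3f}")
```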

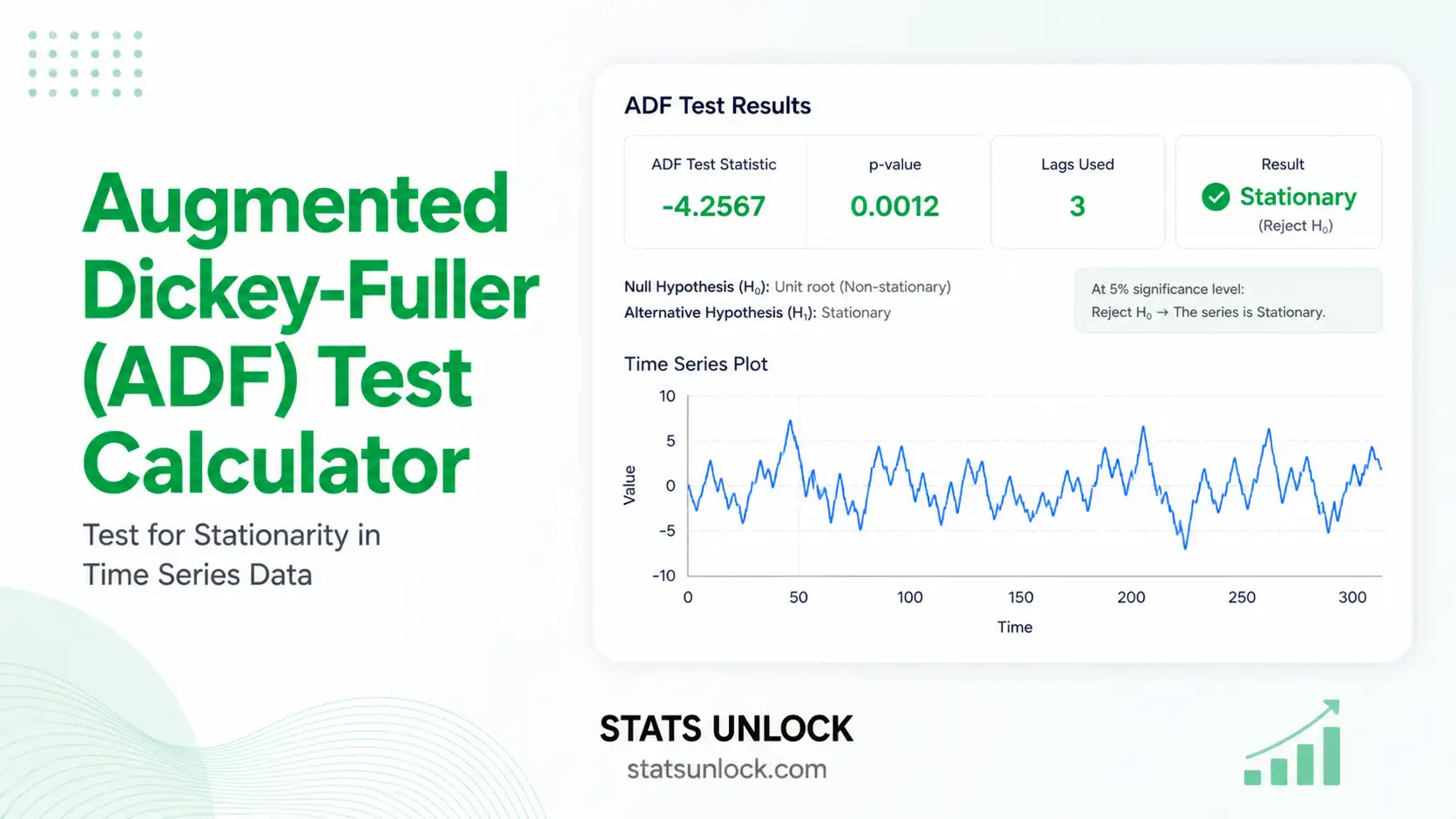

F. Stationarity Check
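The usual criterion is that all roots of 1 − φ₁z − ⋯ − φ_p z^p lie outside the unit circle. The sketch below uses an equivalent test that avoids polynomial root-finding: the step-down (reverse Levinson-Durbin) recursion, under which the model is stationary exactly when every partial autocorrelation it produces has magnitude below 1. The function name is_stationary and the tolerance tol are illustrative assumptions.

```python
def is_stationary(phis, tol=1e-12):
    """True if x_t = c + phi_1 x_{t-1} + ... + phi_p x_{t-p} + e_t is stationary.

    Uses the step-down recursion: the last coefficient at each order is a
    partial autocorrelation, and stationarity requires all of them to lie in (-1, 1).
    """
    a = [float(v) for v in phis]
    while a:
        k = a[-1]
        if abs(k) >= 1.0 - tol:
            return False
        m = len(a) - 1
        # Coefficients of the order-m model implied by the order-(m + 1) model
        a = [(a[j] + k * a[m - 1 - j]) / (1.0 - k * k) for j in range(m)]
    return True


if __name__ == "__main__":
    for phis in ([0.5], [1.0], [0.5, 0.3], [0.6, 0.5], [1.2, -0.5]):
        print(phis, is_stationary(phis))
```

For AR(2) this agrees with the familiar triangle conditions φ₁ + φ₂ < 1, φ₂ − φ₁ < 1, and |φ₂| < 1. The intercept c plays no role in stationarity.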

G. h-Step Forecast
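Point forecasts are built recursively: x̂_{n+h} = c + φ₁x̂_{n+h−1} + ⋯ + φ_p x̂_{n+h−p}, where any x̂ at or before time n is simply the observed value. For intervals, a standard approach uses the ψ-weights of the MA(∞) representation (ψ₀ = 1, ψ_j = Σ_{k=1}^{min(j,p)} φ_k ψ_{j−k}), so the h-step forecast variance is σ²(ψ₀² + ⋯ + ψ_{h−1}²). This sketch assumes Gaussian errors and treats the estimated parameters as known, so its intervals are somewhat too narrow in short series. forecast_ar is a hypothetical name.

```python
import math
from statistics import NormalDist


def forecast_ar(x, c, phis, sigma2, h, alpha=0.05):
    """Return [(point, lower, upper), ...] for steps 1..h of an AR(p) forecast."""
    p = len(phis)
    history = [float(v) for v in x]
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # about 1.96 for alpha = 0.05
    psi = [1.0]  # psi-weights of the MA(infinity) form, psi_0 = 1
    out = []
    for step in range(1, h + 1):
        point = c + sum(phis[k - 1] * history[-k] for k in range(1, p + 1))
        history.append(point)  # later steps reuse earlier forecasts as lags
        var = sigma2 * sum(w * w for w in psi)  # uses psi_0..psi_{step-1}
        half = z * math.sqrt(var)
        out.append((point, point - half, point + half))
        # Next weight: psi_step = sum_k phi_k * psi_{step-k}
        psi.append(sum(phis[k - 1] * psi[step - k] for k in range(1, min(step, p) + 1)))
    return out


if __name__ == "__main__":
    # Hypothetical AR(1) fit: c = 1.0, phi_1 = 0.6, sigma^2 = 1.0, last value 4.0
    for step, (pt, lo, hi) in enumerate(forecast_ar([3.0, 4.0], 1.0, [0.6], 1.0, 3), start=1):
        print(f"h = {step}: {pt:.3f} [{lo:.3f}, {hi:.3f}]")
```

As h grows, the point forecast decays toward the mean c / (1 − Σφ_k) and the interval widens toward the unconditional spread of the series.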

📚 When to Use the AR Model

Decision Checklist

✅ Your data is a single time-ordered numeric series
✅ Observations are roughly equally spaced in time
✅ The series is (or can be made) stationary
✅ The PACF cuts off after some lag p while the ACF tails off
✅ You want to forecast or describe persistence
❌ Don't use if the series is dominated by strong seasonality → use SARIMA
❌ Don't use if the residuals show moving-average behaviour → use ARMA(p, q)
❌ Don't use if the series is non-stationary even after differencing → use cointegration / state-space models
❌ Don't use if observations are independent (no temporal structure) → use OLS / GLM

Real-World Examples

- Ecology & Wildlife — modelling persistence in monthly camera-trap counts of a species across a reserve to detect declining trends or stable populations.

- Economics — short-term forecasts of inflation, exchange rates, or commodity prices where past values strongly predict the next value.

- Climate science — fitting AR(1)/AR(2) to temperature anomalies, river discharge, or atmospheric CO₂ residuals.

- Public health — weekly counts of disease cases with autocorrelation but no clear seasonal cycle yet (early-stage outbreak modelling).

- Engineering & signal processing — vibration, audio, or sensor signals where current readings depend on recent history.

Sample Size Guidance

- Minimum: n = 50 for AR(1)–AR(2)

- Recommended: n ≥ 100 for AR(3)–AR(5)

- For AR(p) with p > 5, aim for n ≥ 200

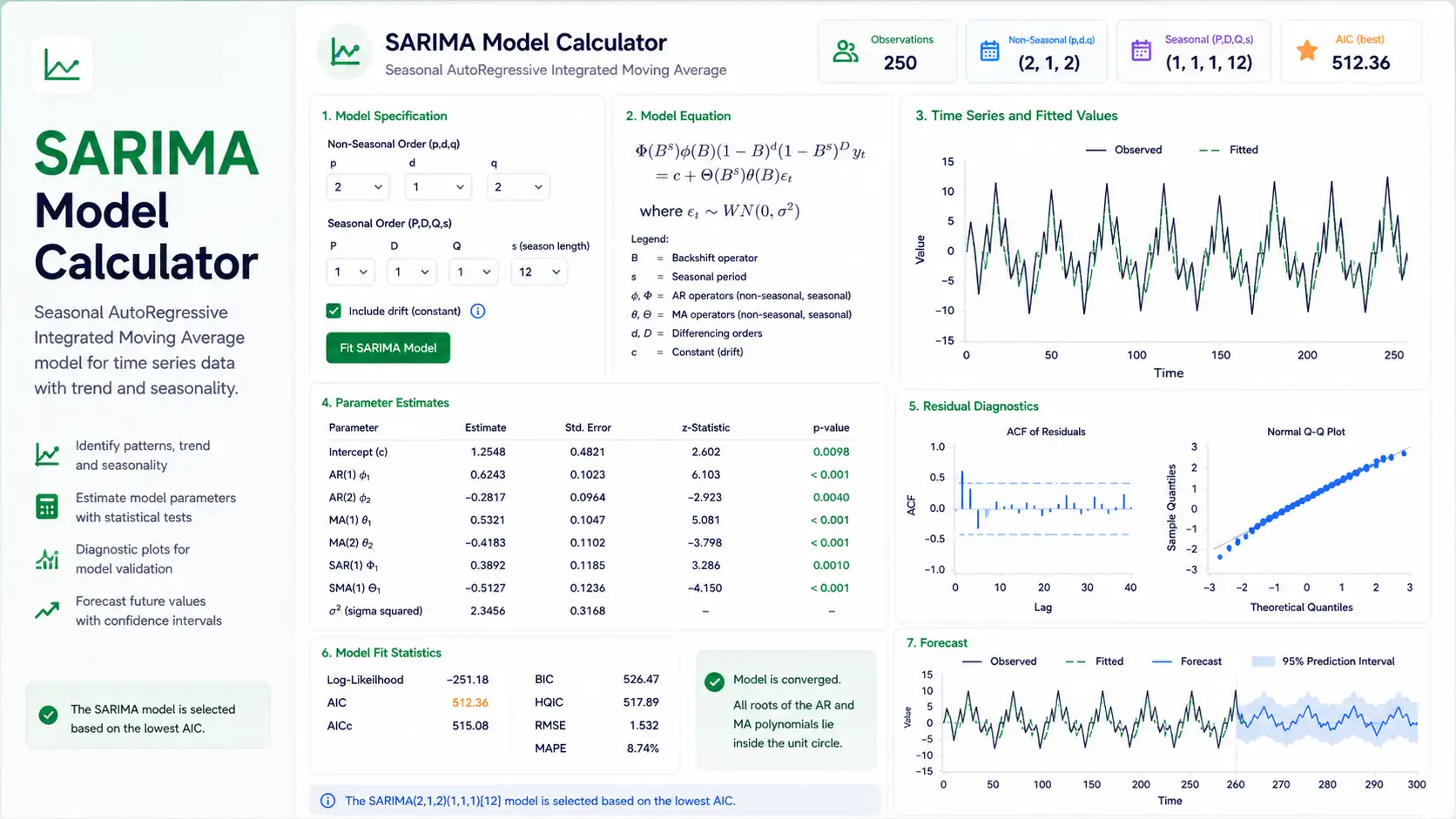

Decision Tree — Time Series Family

Time series →

Stationary?

Yes →

PACF cut-off, ACF tails off → AR(p) ← THIS TOOL

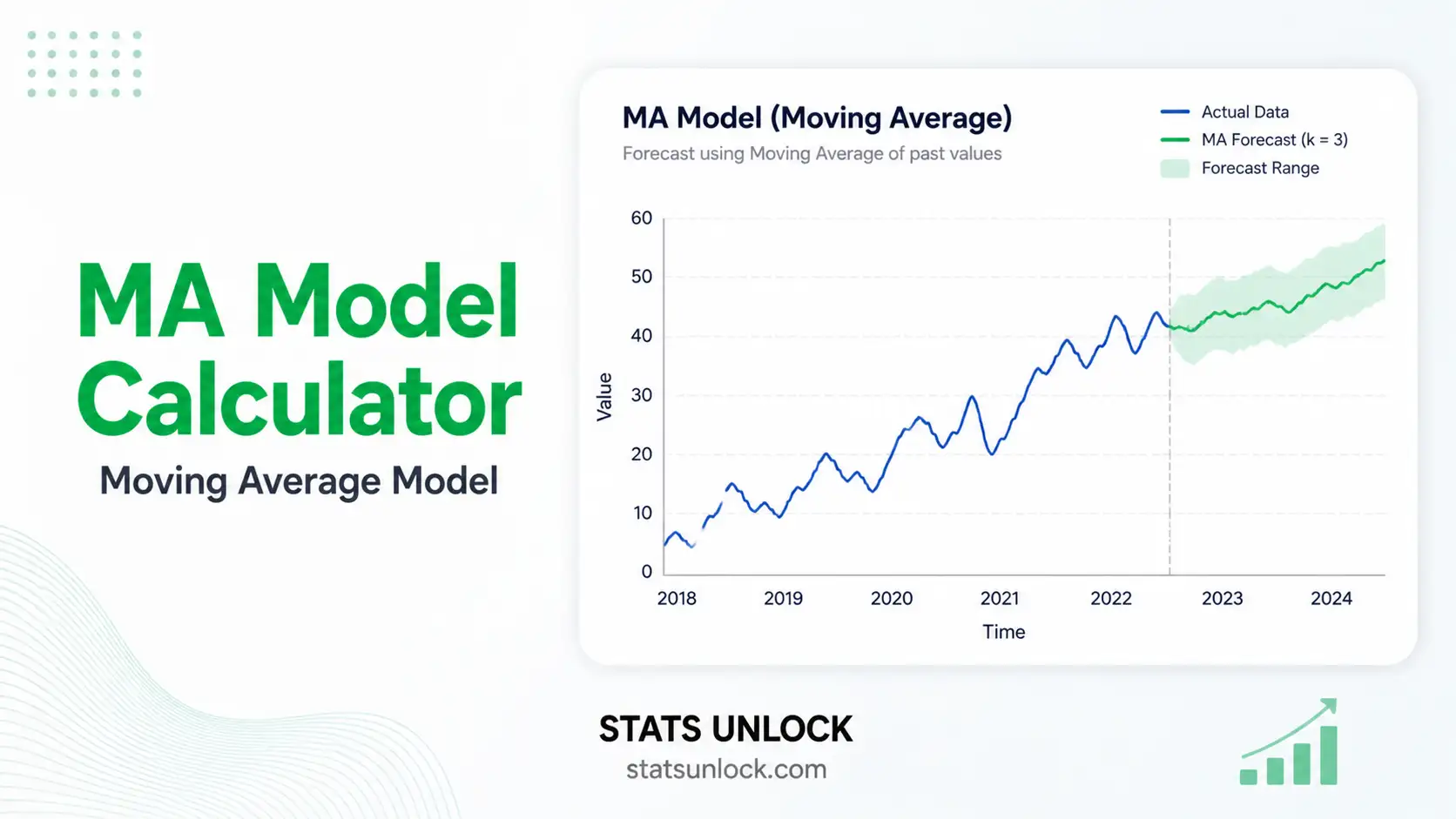

ACF cut-off, PACF tails off → MA(q)

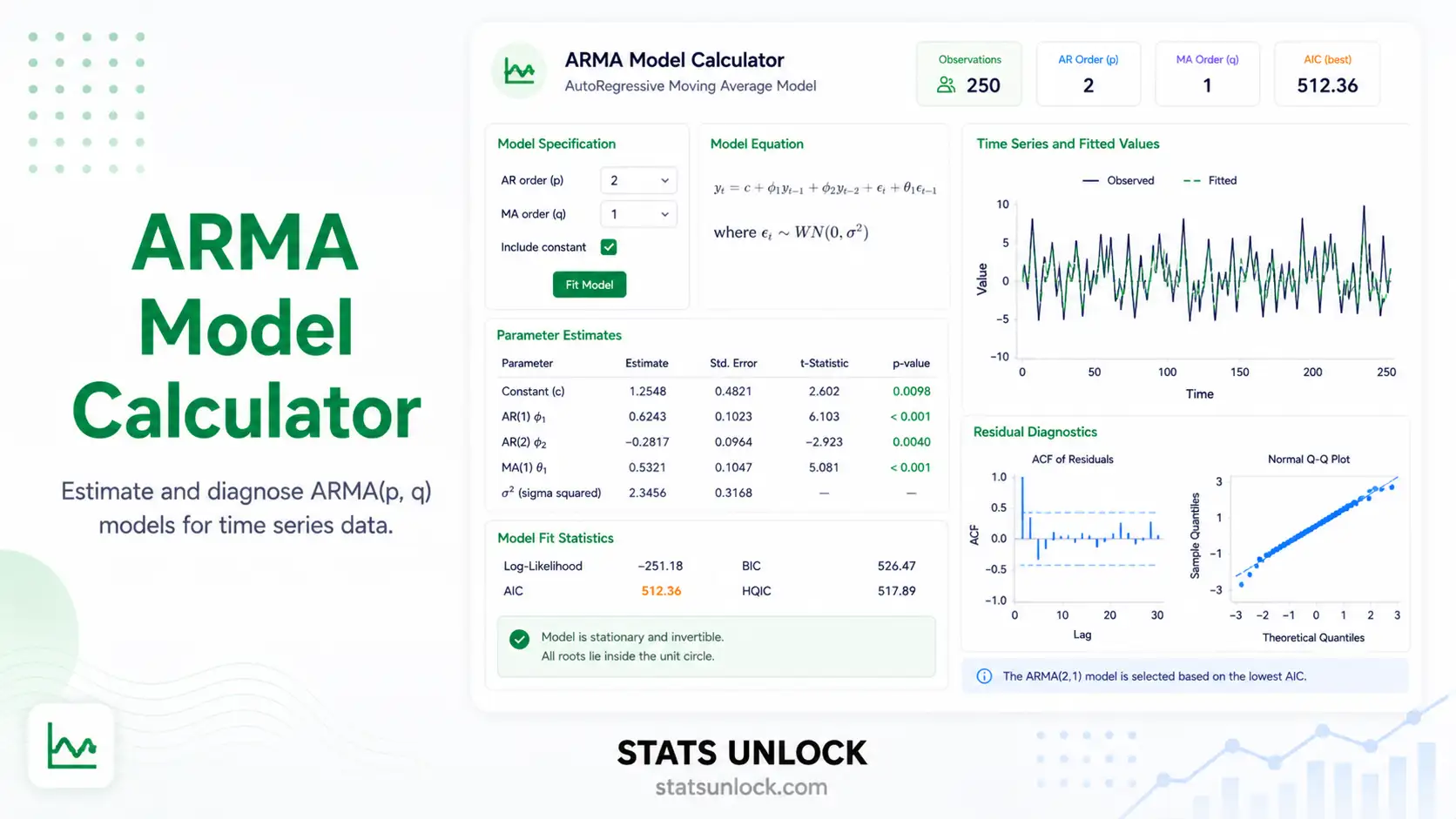

Both tail off → ARMA(p, q)

No →

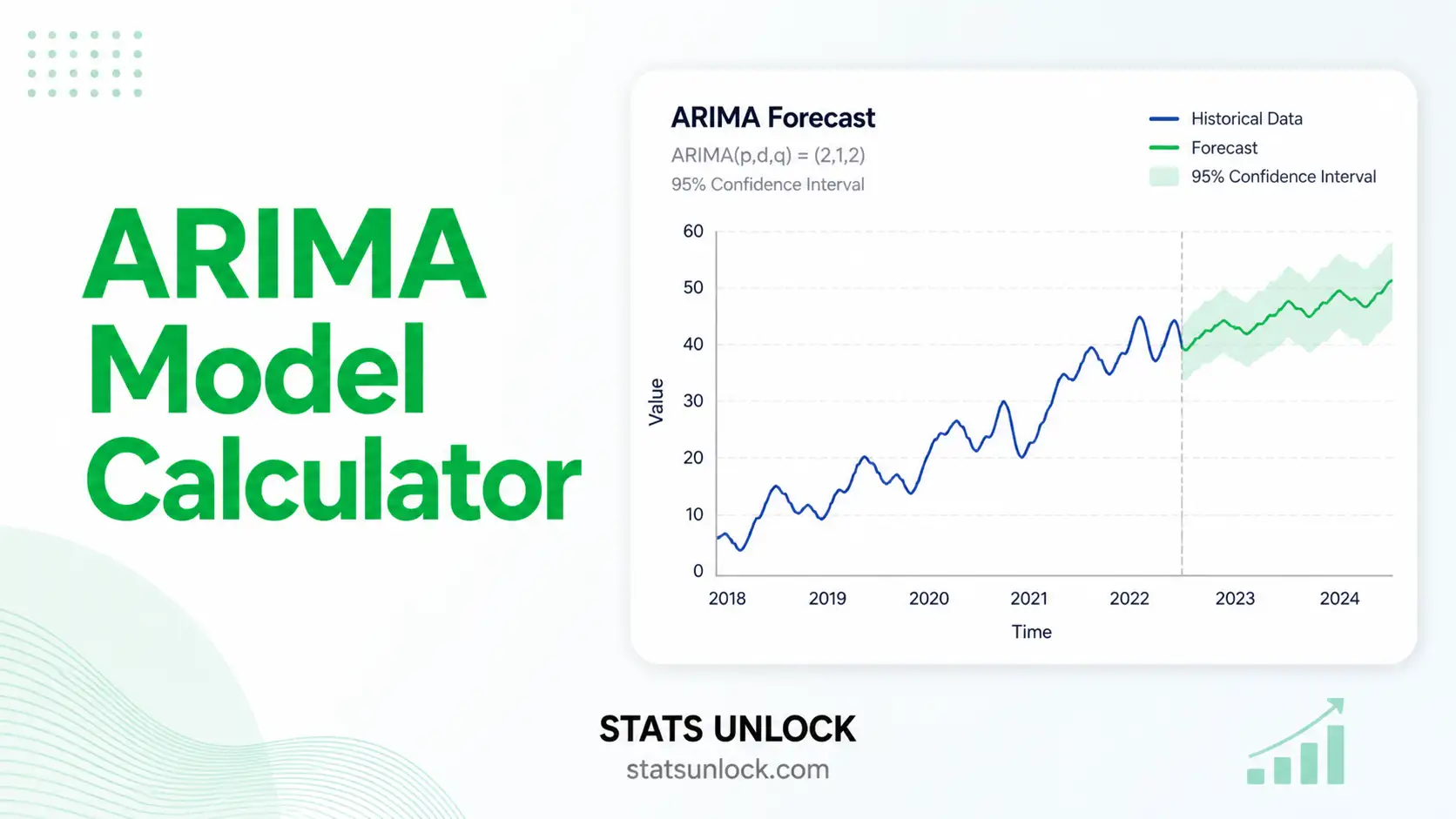

Trend only → ARIMA(p, d, q) (after differencing)

Trend + seasonality → SARIMA / ETS

Multiple related series → VAR / VECM
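The cut-off patterns in the decision tree are read from the sample ACF and PACF. The sketch below computes both in pure Python, using the Durbin-Levinson recursion for the PACF, together with the approximate ±1.96/√n bands commonly drawn on correlograms. The names sample_acf and sample_pacf are hypothetical, and the tool's plots may use different band conventions.

```python
import math
import random


def sample_acf(x, max_lag):
    """Sample autocorrelations r_0..r_max_lag (r_0 = 1)."""
    n = len(x)
    xbar = sum(x) / n
    d = [v - xbar for v in x]
    g0 = sum(v * v for v in d)
    return [1.0] + [sum(d[t] * d[t + k] for t in range(n - k)) / g0
                    for k in range(1, max_lag + 1)]


def sample_pacf(x, max_lag):
    """Partial autocorrelations at lags 1..max_lag via Durbin-Levinson."""
    r = sample_acf(x, max_lag)
    pacf, phi_prev = [], []
    for k in range(1, max_lag + 1):
        num = r[k] - sum(phi_prev[j] * r[k - 1 - j] for j in range(k - 1))
        den = 1.0 - sum(phi_prev[j] * r[j + 1] for j in range(k - 1))
        kappa = num / den  # phi_{k,k}, the lag-k partial autocorrelation
        phi_prev = [phi_prev[j] - kappa * phi_prev[k - 2 - j] for j in range(k - 1)] + [kappa]
        pacf.append(kappa)
    return pacf


if __name__ == "__main__":
    rng = random.Random(0)
    x = [0.0]
    for _ in range(199):
        x.append(0.7 * x[-1] + rng.gauss(0.0, 1.0))  # simulated AR(1), phi = 0.7
    band = 1.96 / math.sqrt(len(x))
    for lag, (rk, pk) in enumerate(zip(sample_acf(x, 6)[1:], sample_pacf(x, 6)), start=1):
        flag = "*" if abs(pk) > band else ""
        print(f"lag {lag}: ACF {rk:+.2f}  PACF {pk:+.2f} {flag}")
```

For an AR(1) like this one, the ACF should decay gradually while only the lag-1 PACF tends to fall outside the band, which is the "PACF cuts off, ACF tails off" pattern.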

📖 How to Use This Tool — Step-by-Step Guide

- Step 1 — Enter your data. Paste comma-separated values into the cluster textarea, e.g. 52, 48, 55, 61, 47, 53, 59, 60, 56, 62. Or pick a sample dataset from the dropdown.

- Step 2 — Pick a sample dataset. Five built-in series are provided: synthetic AR(1) and AR(2), retail sales, temperature anomalies, and camera-trap counts. Sample 1 is pre-loaded.

- Step 3 — Configure the model. Choose the AR order (or "Auto" to let AIC pick). Pick an estimation method (OLS or Yule-Walker). Set the forecast horizon and the significance level α.

- Step 4 — Run the analysis. Click the green button. Computation runs in your browser; nothing is uploaded.

- Step 5 — Read the summary cards. Each card shows one key value — the chosen order, AIC, BIC, residual SE, and the Ljung-Box p-value.

- Step 6 — Read the full results table. Coefficient estimates, SEs, t-values, and p-values are listed with a row per φ_k.

- Step 7 — Examine the four colorful plots. Series + forecast, ACF, PACF, and residuals — each tells a different story about model fit.

- Step 8 — Check assumptions. Stationarity, residual independence (Ljung-Box), residual normality, and constant variance.

- Step 9 — Read the detailed interpretation. Auto-generated paragraphs explain what each value means in plain language.

- Step 10 — Export your results. Use Download Doc (.txt) for a Word-ready report, or Download PDF for a print-ready document.

Worked example — temperature anomalies

Sample 4 contains 80 monthly temperature anomaly values. Loading it and clicking "Run" with default settings yields an AR(1) model with φ̂₁ ≈ 0.62, AIC near 165, and a Ljung-Box p of about 0.74 — meaning residuals look like white noise and the AR(1) fit is adequate.

❓ Frequently Asked Questions

Q1. What is an AR (autoregressive) model and when should I use it?

An autoregressive model writes the current value of a time series as a linear function of its previous p values plus white-noise error. Use it when your series is stationary, shows persistence (today depends on recent history), and the partial autocorrelation function (PACF) cuts off at some finite lag p while the ACF decays gradually.

Typical use cases: short-horizon forecasting in economics, ecology, climate, and engineering when there is no strong seasonality and no moving-average component.

Q2. What is a p-value, and how do I interpret it for the AR model?

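For each AR coefficient φ_k, the p-value is the probability of obtaining an estimate at least as far from zero as the one observed, if the true coefficient were zero. A small p-value (< α) means the contribution of lag k to predicting x_t is statistically distinguishable from zero, given the other lags in the model; whether that contribution is large enough to matter is a separate question (see Q3).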

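For the Ljung-Box residual test, the p-value tells you whether the residuals look like white noise. Here a LARGE p-value is GOOD: it means there is no significant autocorrelation left in the residuals, which is consistent with the model having captured the series' autocorrelation structure.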
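A coefficient's p-value comes from its t-ratio, t = φ̂_k / SE(φ̂_k). The sketch below uses a large-sample normal approximation, p = 2(1 − Φ(|t|)); many packages use a t distribution with n_eff − p − 1 degrees of freedom instead, and for the sample sizes recommended on this page the two are close. coef_p_value is a hypothetical helper name.

```python
import math


def coef_p_value(estimate, se):
    """Two-sided p-value for H0: phi_k = 0 using a large-sample normal approximation."""
    t = estimate / se
    return t, math.erfc(abs(t) / math.sqrt(2.0))  # erfc(|t|/sqrt 2) = 2 * (1 - Phi(|t|))


if __name__ == "__main__":
    t, p = coef_p_value(0.65, 0.09)  # illustrative estimate and standard error
    print(f"t = {t:.2f}, p = {p:.2g}")
```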

Q3. What does statistical significance mean for AR coefficients — and does it equal practical importance?

A coefficient with p < 0.05 is statistically distinguishable from zero, but its magnitude tells you whether it matters in practice. φ̂ = 0.85 with p < 0.001 indicates very strong persistence. φ̂ = 0.05 with p = 0.04 is statistically significant but contributes very little to forecasts.

Q4. What is the "effect size" for an AR model, and how do I interpret it?

The natural effect size is the AR coefficient itself (φ̂_k): the change in the expected value of x_t per unit change in x_{t-k}, holding the other lags fixed. A second measure is the R² of the OLS fit, the share of the series' variance that the AR model explains. As a rough rule of thumb, R² > 0.5 is moderate and R² > 0.7 is strong.

Q5. What assumptions does the AR model require, and what if my data violate them?

The AR model requires: (1) stationarity, (2) residuals are uncorrelated white noise, (3) residuals are approximately normal for inference, (4) residual variance is constant (homoscedasticity).

If non-stationary → difference the series and use ARIMA. If residuals are autocorrelated → increase p or use ARMA. If variance changes over time → use GARCH. If residuals are skewed → consider a Box-Cox transform.

Q6. How large a sample do I need for the AR model to be reliable?

Practical minimums: n ≥ 50 for AR(1)/AR(2); n ≥ 100 for AR(3)–AR(5); n ≥ 200 for higher-order or seasonal extensions. In small samples, estimates of positive AR coefficients tend to be biased downward (toward zero), so persistence is understated.
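For AR(1) fitted with an estimated mean, a classical first-order approximation puts the small-sample bias of the least-squares estimate at about −(1 + 3φ)/n, which is negative (toward zero) for any positive φ. The short sketch below tabulates it for the sample sizes recommended above; it is an approximation that ignores higher-order terms, and ar1_ols_bias is a hypothetical name.

```python
def ar1_ols_bias(phi, n):
    """Approximate E[phi_hat] - phi for an AR(1) fitted by OLS with an estimated mean."""
    return -(1.0 + 3.0 * phi) / n


if __name__ == "__main__":
    for n in (50, 100, 200):
        print(n, round(ar1_ols_bias(0.6, n), 4))
```

The bias shrinks roughly in proportion to 1/n, which is one reason the minimum sample sizes above grow with the model order and persistence.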

Q7. What is the difference between OLS and Yule-Walker estimation?

OLS regresses x_t on its lags directly; under Gaussian errors it gives the same estimates as conditional maximum likelihood. Yule-Walker instead solves the equations linking the AR coefficients to the autocorrelation function. For long, stationary series the two methods agree closely (they are asymptotically equivalent), and OLS also gives standard errors directly. Yule-Walker always yields a stationary fitted model, but in short or highly persistent series it can be noticeably more biased than OLS.

Q8. How do I report AR model results in APA 7th edition format?

Report the order, each coefficient with its standard error, an information criterion, and a residual diagnostic: "An AR(2) model was fitted, φ̂₁ = 0.65 (SE = 0.09), φ̂₂ = -0.21 (SE = 0.09); AIC = 142.3; Ljung-Box Q(10) = 8.42, p = .587, indicating residuals consistent with white noise."
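The template above can also be filled programmatically. The hypothetical helpers below (apa_p and apa_ar_sentence are illustrative names, not part of the tool) follow two APA conventions: p-values are written without a leading zero, and values below .001 are reported as p < .001. The wording of the fallback verdict for a rejected Ljung-Box test is an assumption.

```python
SUBSCRIPTS = str.maketrans("0123456789", "₀₁₂₃₄₅₆₇₈₉")
PHI_HAT = "\u03c6\u0302"  # Greek phi followed by a combining circumflex


def apa_p(p):
    """APA-style p-value: three decimals, no leading zero, floor at .001."""
    if p < 0.001:
        return "p < .001"
    return "p = " + f"{p:.3f}".lstrip("0")


def apa_ar_sentence(phis, ses, aic, q, h, p, alpha=0.05):
    """Build a results sentence matching the template in the answer above."""
    coefs = ", ".join(
        f"{PHI_HAT}{str(k).translate(SUBSCRIPTS)} = {phi:.2f} (SE = {se:.2f})"
        for k, (phi, se) in enumerate(zip(phis, ses), start=1)
    )
    verdict = ("indicating residuals consistent with white noise" if p > alpha
               else "indicating remaining residual autocorrelation")
    return (f"An AR({len(phis)}) model was fitted, {coefs}; AIC = {aic:.1f}; "
            f"Ljung-Box Q({h}) = {q:.2f}, {apa_p(p)}, {verdict}.")


if __name__ == "__main__":
    print(apa_ar_sentence([0.65, -0.21], [0.09, 0.09], 142.3, 8.42, 10, 0.587))
```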

See the "How to Write Your Results" section for five copyable templates auto-filled with your numbers.

Q9. Can I use this calculator for my published research or university assignment?

This calculator is designed for educational use, exploratory analysis, and learning. For peer-reviewed publication, verify your numbers using R (stats::ar, forecast::Arima) or Python (statsmodels.tsa.ar_model.AutoReg). Cite this tool as: STATS UNLOCK. (2025). AR model calculator. Retrieved from https://statsunlock.com.

Q10. What should I do if my Ljung-Box test rejects white-noise residuals?

Rejection means the AR(p) you fit did not capture all the autocorrelation. First, increase p by 1 or 2 and re-fit. If that still fails, move to ARMA(p, q) by adding a moving-average term. If the series shows seasonality you missed, switch to SARIMA. Always re-run the Ljung-Box test on the new residuals.

📚 References

The following references support the statistical methods used in this AR model tool, covering autoregressive time series analysis, model selection, residual diagnostics, and forecasting.

- Yule, G. U. (1927). On a method of investigating periodicities in disturbed series, with special reference to Wolfer's sunspot numbers. Philosophical Transactions of the Royal Society A, 226, 267–298. https://doi.org/10.1098/rsta.1927.0007

- Walker, G. (1931). On periodicity in series of related terms. Proceedings of the Royal Society A, 131, 518–532. https://doi.org/10.1098/rspa.1931.0069

- Box, G. E. P., Jenkins, G. M., Reinsel, G. C., & Ljung, G. M. (2015). Time Series Analysis: Forecasting and Control (5th ed.). Wiley.

- Brockwell, P. J., & Davis, R. A. (2016). Introduction to Time Series and Forecasting (3rd ed.). Springer. https://doi.org/10.1007/978-3-319-29854-2

- Hamilton, J. D. (1994). Time Series Analysis. Princeton University Press.

- Hyndman, R. J., & Athanasopoulos, G. (2021). Forecasting: Principles and Practice (3rd ed.). OTexts. https://otexts.com/fpp3/

- Akaike, H. (1974). A new look at the statistical model identification. IEEE Transactions on Automatic Control, 19(6), 716–723. https://doi.org/10.1109/TAC.1974.1100705

- Schwarz, G. (1978). Estimating the dimension of a model. The Annals of Statistics, 6(2), 461–464. https://doi.org/10.1214/aos/1176344136

- Ljung, G. M., & Box, G. E. P. (1978). On a measure of lack of fit in time series models. Biometrika, 65(2), 297–303. https://doi.org/10.1093/biomet/65.2.297

- Said, S. E., & Dickey, D. A. (1984). Testing for unit roots in autoregressive-moving average models of unknown order. Biometrika, 71(3), 599–607. https://doi.org/10.1093/biomet/71.3.599

- Shumway, R. H., & Stoffer, D. S. (2017). Time Series Analysis and Its Applications (4th ed.). Springer. https://doi.org/10.1007/978-3-319-52452-8

- Cryer, J. D., & Chan, K.-S. (2008). Time Series Analysis: With Applications in R (2nd ed.). Springer. https://doi.org/10.1007/978-0-387-75959-3

- R Core Team. (2024). R: A language and environment for statistical computing. R Foundation for Statistical Computing. https://www.R-project.org/

- Seabold, S., & Perktold, J. (2010). statsmodels: Econometric and statistical modeling with Python. Proceedings of the 9th Python in Science Conference. https://www.statsmodels.org/

- NIST/SEMATECH. (2013). e-Handbook of Statistical Methods — Time Series. National Institute of Standards and Technology. https://www.itl.nist.gov/div898/handbook/