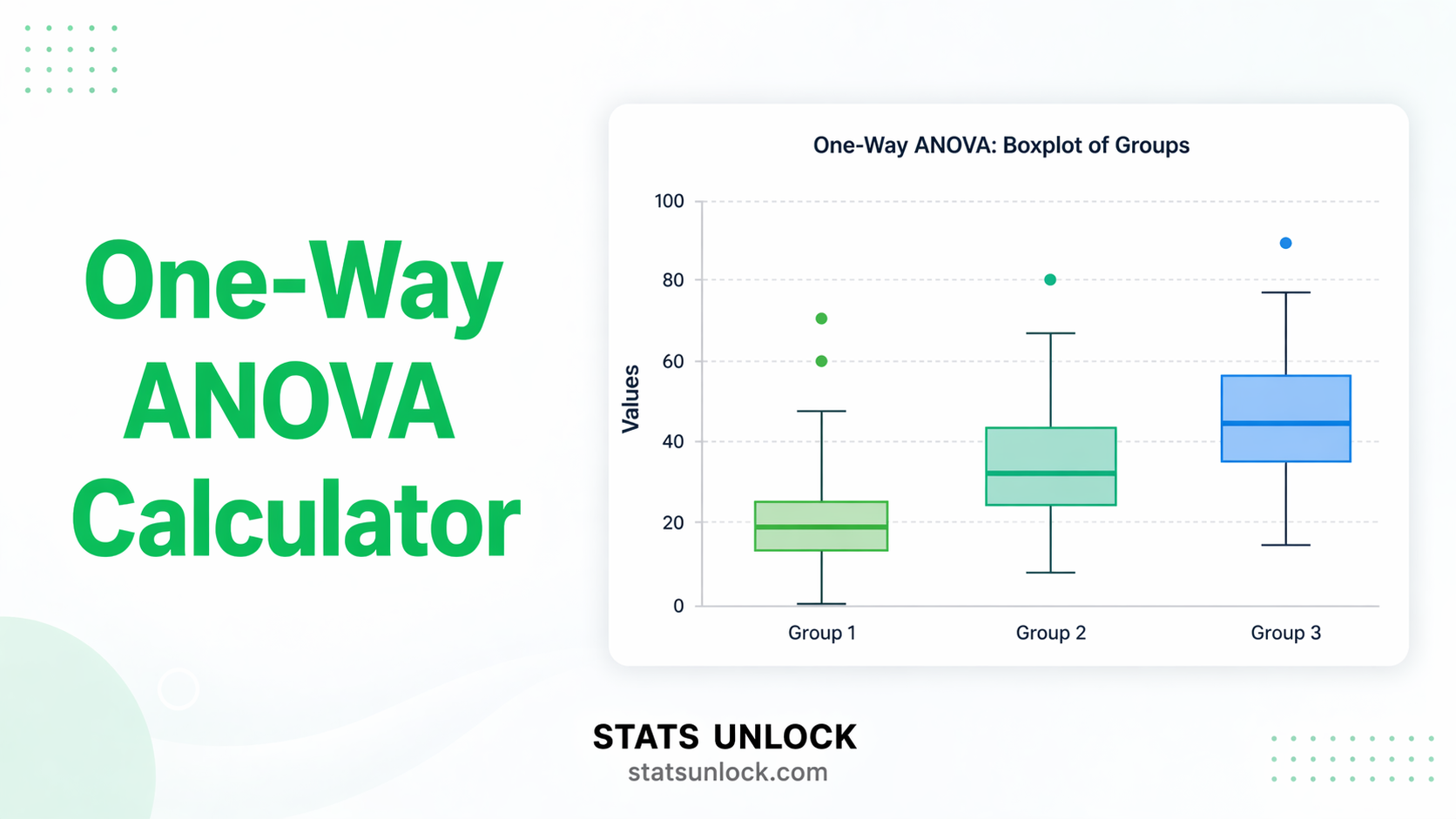

One-Way ANOVA Calculator

Compare means across three or more independent groups. Get F-statistic, p-value, η² effect size, Tukey HSD post-hoc comparisons, and five APA-ready reporting templates — free, no software needed.

One-Way ANOVA Formulas
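A sketch of the standard textbook one-way ANOVA decomposition, consistent with the definitions of F, η² and ω² given further down this page. The notation is assumed here rather than taken from the calculator: k groups, n_i observations in group i, N observations in total, x_ij the j-th observation in group i, x̄_i the mean of group i and x̄ the grand mean.

```latex
\begin{aligned}
SS_{\text{between}} &= \sum_{i=1}^{k} n_i\,(\bar{x}_i - \bar{x})^2 \\
SS_{\text{within}}  &= \sum_{i=1}^{k} \sum_{j=1}^{n_i} (x_{ij} - \bar{x}_i)^2 \\
SS_{\text{total}}   &= SS_{\text{between}} + SS_{\text{within}} \\
MS_{\text{between}} &= \frac{SS_{\text{between}}}{k - 1}, \qquad
MS_{\text{within}}  = \frac{SS_{\text{within}}}{N - k} \\
F &= \frac{MS_{\text{between}}}{MS_{\text{within}}} \;\sim\; F(k - 1,\; N - k) \ \text{under } H_0 \\
\eta^2 &= \frac{SS_{\text{between}}}{SS_{\text{total}}}, \qquad
\omega^2 = \frac{SS_{\text{between}} - (k - 1)\,MS_{\text{within}}}{SS_{\text{total}} + MS_{\text{within}}}
\end{aligned}
```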

Tukey HSD Formula
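A sketch of the usual Tukey HSD statistic, using the same notation as the ANOVA table (MS_within with N − k degrees of freedom). Here q_{α;k,N−k} is the upper-α critical value of the studentized range distribution for k means; the first line is the Tukey-Kramer form, which also covers unequal group sizes, and the second line is the classic equal-n shortcut. Whether the calculator uses exactly this form is an assumption.

```latex
\begin{aligned}
q_{ij} &= \frac{\lvert \bar{x}_i - \bar{x}_j \rvert}
              {\sqrt{\dfrac{MS_{\text{within}}}{2}\left(\dfrac{1}{n_i} + \dfrac{1}{n_j}\right)}}
        \qquad \text{reject } H_0\!: \mu_i = \mu_j \text{ if } q_{ij} > q_{\alpha;\,k,\,N-k} \\
\text{HSD} &= q_{\alpha;\,k,\,N-k}\,\sqrt{\frac{MS_{\text{within}}}{n}}
        \qquad \text{(equal group sizes } n_i = n\text{)}
\end{aligned}
```

With equal group sizes the two lines agree: a pair differs exactly when its absolute mean difference exceeds HSD.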

Levene's Test for Homogeneity of Variance
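Levene's statistic is the one-way ANOVA F computed on absolute deviations from each group's centre. Levene's original test centres on the group mean; the Brown-Forsythe variant centres on the median and is SciPy's default (`scipy.stats.levene` with `center='median'`). Which centre this calculator uses is an assumption on our part.

```latex
\begin{aligned}
Z_{ij} &= \lvert x_{ij} - c_i \rvert,
  \qquad c_i = \text{mean of group } i \text{ (Levene) or median of group } i \text{ (Brown-Forsythe)} \\
W &= \frac{N - k}{k - 1} \cdot
     \frac{\sum_{i=1}^{k} n_i\,(\bar{Z}_{i\cdot} - \bar{Z}_{\cdot\cdot})^2}
          {\sum_{i=1}^{k} \sum_{j=1}^{n_i} (Z_{ij} - \bar{Z}_{i\cdot})^2}
     \;\approx\; F(k - 1,\; N - k) \ \text{under equal variances}
\end{aligned}
```

A small W (p ≥ .05) is consistent with equal variances; a large W suggests switching to Welch's ANOVA.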

Technical Notes

- η² (eta-squared): Overestimates population effect size, especially with small samples. Use ω² (omega-squared) for a less biased estimate.

- ω² (omega-squared): Less biased than η². It becomes negative whenever F < 1 (typically for very small, non-significant effects); report it as 0 in that case.

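- Post-hoc timing: By convention, Tukey HSD is run only when the omnibus F-test is significant. Tukey HSD already controls the familywise error rate at α on its own, so skipping this gate does not inflate Type I error; the gate is what protects uncorrected follow-ups such as Fisher's LSD.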

- Bonferroni correction: Divides α by the number of comparisons. Across a full set of pairwise comparisons it is more conservative than Tukey; it is preferred when comparisons are planned and few.

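- Welch's ANOVA: Recommended when Levene's test is significant (p < .05). It does not assume equal variances: each group is weighted by n/s², and the denominator degrees of freedom are Welch-adjusted (usually non-integer). The Brown-Forsythe F-test is a separate robust alternative.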

- Kruskal-Wallis test: The non-parametric alternative to one-way ANOVA. Use when normality is severely violated and groups are small (<30 each).
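Welch's ANOVA from the notes above is short enough to sketch directly. This is a minimal Python illustration of the textbook Welch F-test, assuming NumPy and SciPy are installed; the function name `welch_anova` and the example data are hypothetical, and this is not the calculator's own code. With two groups it reduces to Welch's unequal-variance t-test (F = t²), which makes a convenient sanity check.

```python
import numpy as np
from scipy import stats


def welch_anova(*groups):
    """Welch's heteroscedastic one-way ANOVA. Returns (F, df1, df2, p).

    Sketch only: assumes every group has at least 2 observations and
    non-zero variance (otherwise the n/s^2 weights are undefined).
    """
    k = len(groups)
    n = np.array([len(g) for g in groups], dtype=float)
    means = np.array([np.mean(g) for g in groups])
    variances = np.array([np.var(g, ddof=1) for g in groups])

    # Each group is weighted by n_i / s_i^2, so noisier groups count for less.
    w = n / variances
    w_sum = w.sum()
    weighted_mean = np.sum(w * means) / w_sum

    # Numerator: weighted spread of the group means around the weighted grand mean.
    a = np.sum(w * (means - weighted_mean) ** 2) / (k - 1)
    # Correction term shared by the denominator and the df adjustment.
    lam = np.sum((1 - w / w_sum) ** 2 / (n - 1))
    b = 1 + 2 * (k - 2) / (k**2 - 1) * lam

    f_stat = a / b
    df1 = k - 1
    df2 = (k**2 - 1) / (3 * lam)  # Welch-adjusted, generally non-integer
    p = stats.f.sf(f_stat, df1, df2)
    return f_stat, df1, df2, p


# Illustrative, made-up data with clearly unequal spreads.
low_var = [5.1, 4.9, 5.0, 5.2, 4.8, 5.1]
mid_var = [5.6, 6.4, 5.9, 6.8, 5.2, 6.1]
high_var = [3.0, 8.5, 6.2, 9.9, 4.1, 7.3]
F, df1, df2, p = welch_anova(low_var, mid_var, high_var)
print(f"Welch F({df1}, {df2:.2f}) = {F:.2f}, p = {p:.4f}")
```

Weighting by n/s² rather than pooling the variances is what lets the test tolerate heterogeneous groups; the price is the fractional denominator df.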

One-way ANOVA answers: "Do any of these groups have different population means?" It tests multiple groups simultaneously, controlling the Type I error rate in a way that repeated t-tests cannot.

Decision Checklist

- ✅You have three or more independent groups

- ✅Your dependent variable is continuous (interval or ratio scale)

- ✅Observations within and between groups are independent

- ✅Data within each group are approximately normally distributed, or n ≥ 30 per group

- ✅Group variances are approximately equal (check Levene's test)

- ❌Do NOT use for only two groups → use Independent Samples t-Test

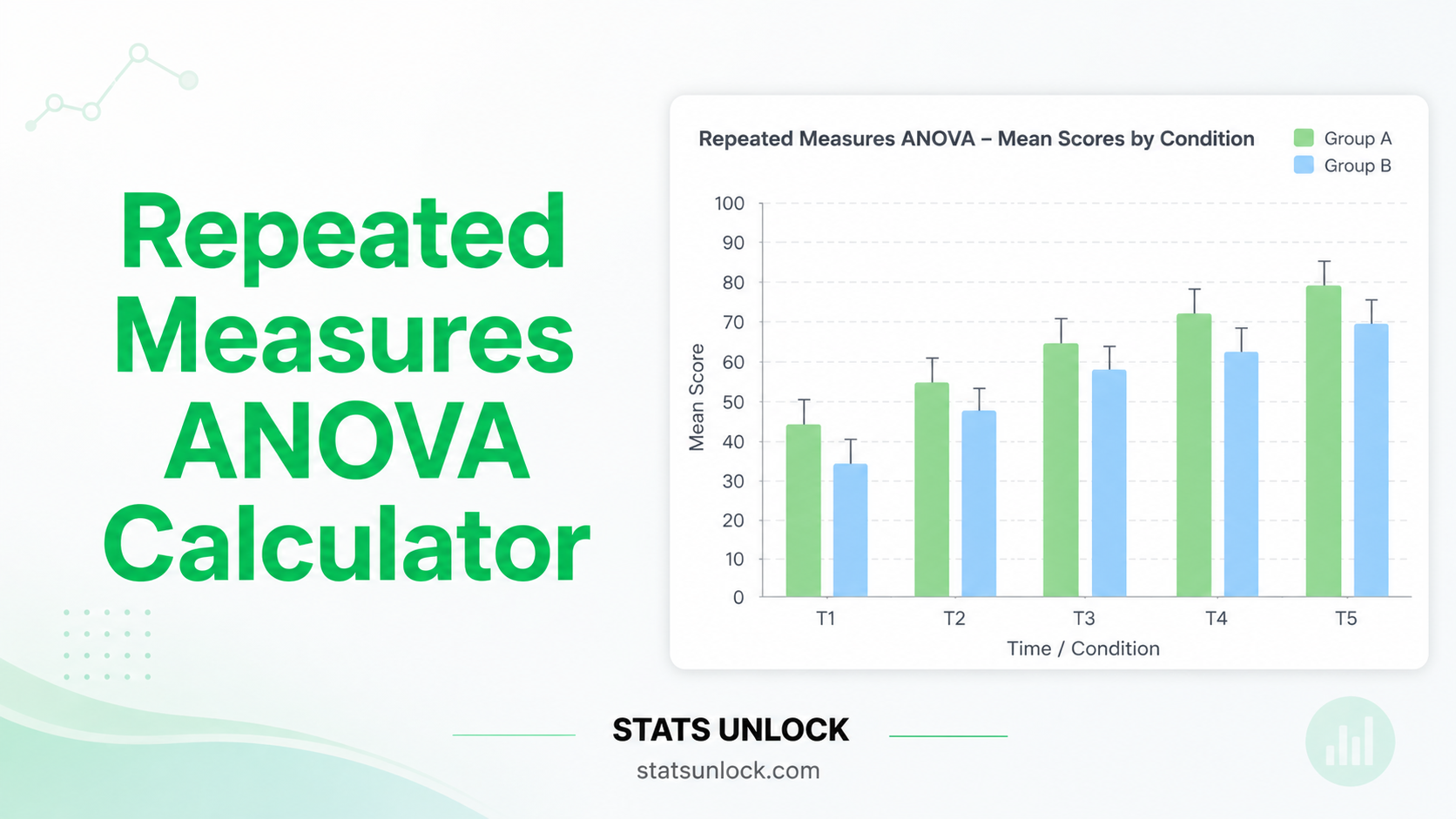

- ❌Do NOT use if the same participants appear in all groups → use Repeated-Measures ANOVA

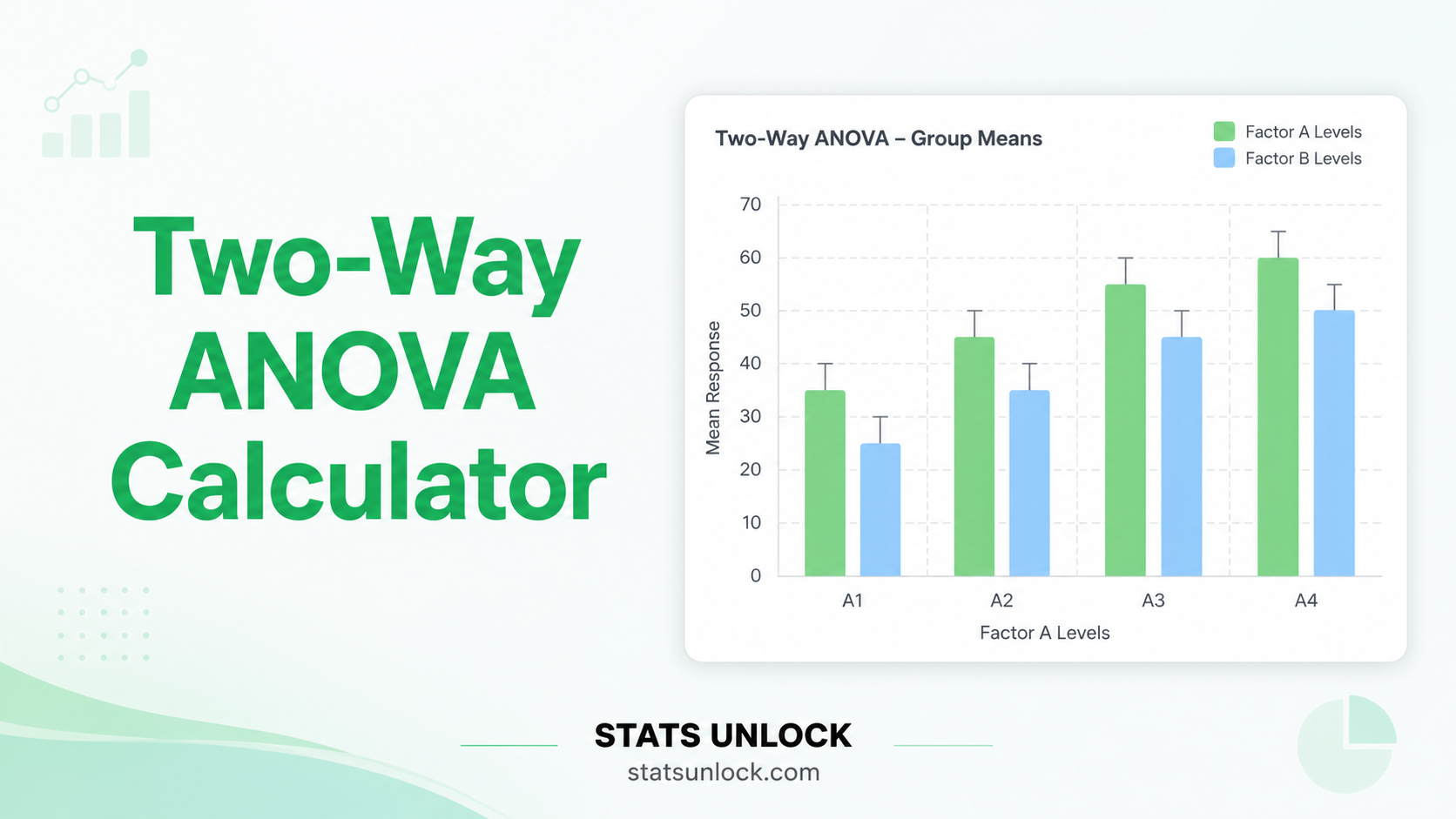

- ❌Do NOT use if you have two independent variables → use Two-Way ANOVA

- ❌Do NOT use if normality is severely violated with small groups → use Kruskal-Wallis Test

Real-World Examples

Comparing mean exam scores across four teaching methods (traditional lecture, flipped classroom, problem-based learning, online) to determine which is most effective.

Comparing plant growth (cm) under five fertiliser conditions to determine whether fertiliser type significantly affects growth.

Comparing post-treatment anxiety scores across three therapy types (CBT, medication, combination) to evaluate relative treatment effectiveness.

Comparing mean sprint times across four training programmes to identify which programme produces the fastest athletes.

Related Tests — Decision Tree

What is a one-way ANOVA and when should I use it?

One-way ANOVA tests whether the means of three or more independent groups differ significantly. It uses the F-statistic (ratio of between-group to within-group variance) to determine whether observed group differences are larger than expected by chance. Use it when you have one categorical grouping variable and one continuous outcome measured in separate, independent groups.

Why use ANOVA instead of multiple t-tests?

Running multiple pairwise t-tests inflates the familywise Type I error rate. With m independent tests each run at α = .05, the chance of at least one false positive is 1 − (1 − .05)^m: for 3 groups (3 comparisons) that is about 14.3% instead of 5%, and for 5 groups (10 comparisons) about 40%. (Pairwise comparisons share data and are not fully independent, so these figures are approximations.) ANOVA tests all groups simultaneously at the specified α level, maintaining the overall error rate. If ANOVA is significant, post-hoc tests identify the specific pairs that differ.
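The percentages above come from the formula 1 − (1 − α)^m with m = k(k − 1)/2 pairwise comparisons. A minimal Python sketch, assuming (as that formula does) that the tests are independent; the function name `familywise_error` is hypothetical:

```python
from math import comb


def familywise_error(k_groups: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive across all pairwise t-tests,
    under the simplifying assumption that the tests are independent."""
    m = comb(k_groups, 2)  # number of pairwise comparisons: k(k-1)/2
    return 1 - (1 - alpha) ** m


for k in (3, 4, 5):
    print(f"{k} groups, {comb(k, 2)} comparisons: {familywise_error(k):.1%}")
# 3 groups, 3 comparisons: 14.3%
# 4 groups, 6 comparisons: 26.5%
# 5 groups, 10 comparisons: 40.1%
```

The rate grows quickly because the number of pairs grows quadratically with the number of groups.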

What is the F-statistic and how do I interpret it?

The F-statistic = MS_between / MS_within. MS_between measures how much the group means vary around the grand mean; MS_within measures how much individual observations vary within their group. An F close to 1 suggests the between-group variation is no greater than random within-group variation. Large F values (p < α) indicate that at least one group mean differs significantly from the others.
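To make the F ratio concrete, here is a from-scratch computation of the ANOVA table in Python (NumPy and SciPy assumed), cross-checked against `scipy.stats.f_oneway`. The function name `one_way_anova` and the exam-score data are made up for illustration.

```python
import numpy as np
from scipy import stats


def one_way_anova(*groups):
    """Return SS, df, MS, F and p for a one-way ANOVA, computed from first principles."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    all_obs = np.concatenate(groups)
    grand_mean = all_obs.mean()
    k, n_total = len(groups), all_obs.size

    # Between-group SS: how far each group mean sits from the grand mean.
    ss_between = sum(g.size * (g.mean() - grand_mean) ** 2 for g in groups)
    # Within-group SS: spread of observations around their own group mean.
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)

    df_between, df_within = k - 1, n_total - k
    ms_between = ss_between / df_between
    ms_within = ss_within / df_within
    f_stat = ms_between / ms_within
    p = stats.f.sf(f_stat, df_between, df_within)  # upper-tail area of F(df_b, df_w)
    return {"ss_between": ss_between, "ss_within": ss_within,
            "df_between": df_between, "df_within": df_within,
            "ms_between": ms_between, "ms_within": ms_within,
            "F": f_stat, "p": p}


# Illustrative, made-up exam scores for three teaching methods.
lecture = [72, 75, 68, 80, 74]
flipped = [78, 82, 85, 79, 81]
problem_based = [70, 73, 69, 75, 72]

table = one_way_anova(lecture, flipped, problem_based)
print(f"F({table['df_between']}, {table['df_within']}) = {table['F']:.2f}, p = {table['p']:.4f}")
print(stats.f_oneway(lecture, flipped, problem_based))  # cross-check against SciPy
```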

What is eta-squared (η²) and how do I interpret it?

Eta-squared (η²) = SS_between / SS_total. It represents the proportion of total variance accounted for by the group factor. Cohen's (1988) benchmarks: small = 0.01, medium = 0.06, large = 0.14. Omega-squared (ω²) is a less biased estimate of the population effect size, especially valuable with small samples. This calculator reports both. For publication, report ω² alongside η².
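Both effect sizes follow directly from the ANOVA table. The sketch below uses the standard between-subjects formula ω² = (SS_between − df_between · MS_within) / (SS_total + MS_within); the function name `effect_sizes` and the numbers in the usage line are hypothetical.

```python
def effect_sizes(ss_between, ss_within, df_between, df_within):
    """Eta-squared and omega-squared for a between-subjects one-way ANOVA."""
    ss_total = ss_between + ss_within
    ms_within = ss_within / df_within
    eta_sq = ss_between / ss_total
    omega_sq = (ss_between - df_between * ms_within) / (ss_total + ms_within)
    # omega-squared goes negative when F < 1; the convention is to report 0.
    return eta_sq, max(omega_sq, 0.0)


eta, omega = effect_sizes(ss_between=120.0, ss_within=300.0, df_between=2, df_within=27)
print(f"eta^2 = {eta:.3f}, omega^2 = {omega:.3f}")  # eta^2 = 0.286, omega^2 = 0.227
```

The gap between the two values illustrates the upward bias of η² in a sample of this size.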

What assumptions does one-way ANOVA require?

1. Independence: Observations must be independent within and across groups.

2. Normality: Each group's data should be approximately normally distributed, or n ≥ 30 per group (by the central limit theorem, the group means are then approximately normal).

3. Homogeneity of variances: All groups should have similar population variances; check with Levene's test. If this is violated (p < .05), use Welch's ANOVA.

If normality is severely violated with small samples, use Kruskal-Wallis.
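The checklist above can be screened roughly in code. A minimal sketch assuming SciPy; the function name `screen_assumptions`, the decision rule and the data are hypothetical simplifications of this page's advice. Formal tests are blunt instruments (Shapiro-Wilk misses real departures in small groups and flags trivial ones in large groups), so treat the suggestion as a prompt to look at the data, not a verdict.

```python
from scipy import stats


def screen_assumptions(*groups, alpha=0.05):
    """Run Levene's and Shapiro-Wilk tests and suggest a test per this page's checklist."""
    # SciPy's default center="median" is the Brown-Forsythe variant of Levene's test.
    levene_p = stats.levene(*groups).pvalue
    # Shapiro-Wilk per group (each group needs at least 3 observations).
    shapiro_p = [stats.shapiro(g).pvalue for g in groups]
    small_groups = min(len(g) for g in groups) < 30

    if small_groups and min(shapiro_p) < alpha:
        suggestion = "Kruskal-Wallis"
    elif levene_p < alpha:
        suggestion = "Welch's ANOVA"
    else:
        suggestion = "one-way ANOVA"
    return {"levene_p": levene_p, "shapiro_p": shapiro_p, "suggested": suggestion}


# Illustrative, made-up post-treatment anxiety scores.
cbt = [12, 15, 11, 14, 13, 16, 12, 14]
medication = [14, 17, 15, 13, 16, 18, 15, 17]
combination = [10, 11, 9, 12, 10, 13, 11, 10]

print(screen_assumptions(cbt, medication, combination))
print(stats.kruskal(cbt, medication, combination))  # non-parametric alternative
```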

What is Tukey HSD and when is it used?

Tukey's Honestly Significant Difference (HSD) compares all possible pairs of group means following a significant ANOVA. It controls the familywise error rate at α, making it more conservative than raw t-tests but less conservative than Bonferroni. It is the most widely recommended post-hoc test when group sizes are equal or similar. Never run post-hoc tests if the omnibus F is non-significant.
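A minimal Python sketch of the Tukey-Kramer form of Tukey HSD (it also handles unequal group sizes), assuming SciPy 1.8 or later for `scipy.stats.studentized_range` and `scipy.stats.tukey_hsd`. The function name `tukey_kramer` and the data are hypothetical; SciPy's own `tukey_hsd` is shown as a cross-check.

```python
from itertools import combinations

import numpy as np
from scipy import stats


def tukey_kramer(*groups):
    """Pairwise Tukey HSD. Returns a list of (i, j, mean_diff, q, p) for every pair."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    k = len(groups)
    n = np.array([g.size for g in groups])
    means = np.array([g.mean() for g in groups])
    df_within = n.sum() - k
    # Pooled within-group variance, i.e. MS_within from the ANOVA table.
    ms_within = sum(((g - g.mean()) ** 2).sum() for g in groups) / df_within

    results = []
    for i, j in combinations(range(k), 2):
        se = np.sqrt(ms_within / 2 * (1 / n[i] + 1 / n[j]))
        q = abs(means[i] - means[j]) / se
        # Upper-tail probability of the studentized range for k means.
        p = stats.studentized_range.sf(q, k, df_within)
        results.append((i, j, means[i] - means[j], q, p))
    return results


# Illustrative, made-up data with unequal group sizes.
a = [24.5, 23.5, 26.4, 27.1, 29.9]
b = [28.4, 34.2, 29.5, 32.2, 30.1, 31.0]
c = [26.1, 28.3, 24.3, 26.2, 27.8]
for i, j, diff, q, p in tukey_kramer(a, b, c):
    print(f"group {i} vs {j}: diff = {diff:+.2f}, q = {q:.2f}, p = {p:.4f}")
print(stats.tukey_hsd(a, b, c))  # SciPy's implementation of the same procedure
```

Because every pair is referred to the distribution of the largest of k means' range, the familywise error rate stays at α without any separate correction.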

What is the difference between Tukey HSD and Bonferroni correction?

Tukey HSD uses the Studentized Range distribution and is optimised for all pairwise comparisons. Bonferroni divides α by the number of comparisons (adjusted α = α/m), making it more conservative when there are many comparisons. For a small number of pairwise comparisons (3–6), Tukey HSD is generally preferred for its better statistical power. For planned (a priori) comparisons, Bonferroni is often more appropriate.
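One way to see the power difference is to put both procedures' critical values on the scale of an ordinary two-sample t statistic (equal group sizes): Tukey's critical q divided by √2, versus the two-sided Bonferroni t quantile at α/m. A smaller critical value flags real differences more readily. A minimal sketch assuming SciPy 1.7 or later; `critical_values` is a hypothetical name, and `studentized_range.ppf` can take a moment to evaluate.

```python
from math import comb, sqrt

from scipy import stats


def critical_values(k, df_within, alpha=0.05):
    """Tukey and Bonferroni critical values on the t scale, for all k(k-1)/2 pairs."""
    m = comb(k, 2)
    # Studentized range quantile / sqrt(2) converts q to the t scale.
    tukey = stats.studentized_range.ppf(1 - alpha, k, df_within) / sqrt(2)
    # Two-sided t quantile at alpha / m.
    bonferroni = stats.t.ppf(1 - alpha / (2 * m), df_within)
    return tukey, bonferroni


crit = {k: critical_values(k, df_within=30) for k in (2, 3, 4)}
for k, (tukey, bonf) in crit.items():
    print(f"k = {k}: Tukey {tukey:.3f} vs Bonferroni {bonf:.3f}")
```

With two groups the procedures coincide; from three groups upward Tukey's threshold is the lower of the two for a full set of pairwise comparisons.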

How do I report one-way ANOVA results in APA 7th edition format?

ANOVA: "A one-way ANOVA revealed a [significant/non-significant] effect of [IV] on [DV], F(df_between, df_within) = ___, p [</=] ___, η² = ___, ω² = ___." Post-hoc: "Tukey HSD post-hoc comparisons indicated that [Group A] (M = ___, SD = ___) scored significantly [higher/lower] than [Group B] (M = ___, SD = ___), p = ___, d = ___." Run the analysis above for five auto-filled reporting templates.
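The ANOVA template can be filled programmatically. A minimal sketch, not the calculator's own template code: the helper names are hypothetical, the numbers in the usage line are illustrative, and it applies two APA 7 conventions (no leading zero for values that cannot exceed 1, such as p, η² and ω², and "p < .001" for very small p).

```python
def no_leading_zero(x: float, decimals: int = 2) -> str:
    """Format a value bounded by 1 without the leading zero, e.g. 0.29 -> '.29'."""
    s = f"{x:.{decimals}f}"
    return s[1:] if s.startswith("0.") else s


def format_p(p: float) -> str:
    """Exact p to three decimals, or 'p < .001' for very small values."""
    if p < 0.001:
        return "p < .001"
    return "p = " + no_leading_zero(p, 3)


def anova_sentence(iv, dv, df_between, df_within, f_stat, p, eta_sq, omega_sq, alpha=0.05):
    verdict = "significant" if p < alpha else "non-significant"
    omega_sq = max(omega_sq, 0.0)  # a negative omega-squared is reported as 0
    return (
        f"A one-way ANOVA revealed a {verdict} effect of {iv} on {dv}, "
        f"F({df_between}, {df_within}) = {f_stat:.2f}, {format_p(p)}, "
        f"η² = {no_leading_zero(eta_sq)}, ω² = {no_leading_zero(omega_sq)}."
    )


print(anova_sentence("teaching method", "exam score", 2, 27, 5.6, 0.0093, 0.293, 0.2347))
```

In a manuscript the statistical symbols (F, p) are italicised; plain text cannot carry that.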

My ANOVA is significant — what do I do next?

A significant F only tells you at least one group mean differs — not which ones. Follow up with post-hoc tests (Tukey HSD is default here) to identify specific pairwise differences. Report: (1) the omnibus F-test result; (2) effect size (η² and ω²); (3) descriptive statistics for each group; (4) post-hoc results for all significant pairs; (5) a conclusion about which groups differ and in what direction.

What should I do if my ANOVA result is non-significant?

A non-significant F (p ≥ α) means the data do not provide sufficient evidence to conclude any group means differ. Do not run post-hoc tests. Report the F-value, p-value, and η² regardless — readers need this information. Consider checking statistical power and whether the study was adequately sized to detect the expected effect. A non-significant result does not prove the groups are equal.
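Power for a planned design can be computed from the noncentral F distribution. A minimal sketch assuming SciPy, Cohen's effect size f (related to η² by f = √(η² / (1 − η²))), and the common noncentrality parameterisation λ = f² · N; the function names are hypothetical. The effect size should be chosen in advance (for example Cohen's medium f = 0.25, roughly η² = .06), not plugged in from the observed result.

```python
from math import sqrt

from scipy import stats


def f_from_eta_sq(eta_sq: float) -> float:
    """Convert eta-squared to Cohen's f."""
    return sqrt(eta_sq / (1 - eta_sq))


def anova_power(effect_f: float, n_total: int, k_groups: int, alpha: float = 0.05) -> float:
    """Power of the one-way ANOVA F-test, assuming noncentrality lambda = f^2 * N."""
    df_between = k_groups - 1
    df_within = n_total - k_groups
    f_crit = stats.f.ppf(1 - alpha, df_between, df_within)  # rejection threshold under H0
    nc = effect_f**2 * n_total                               # noncentrality under H1
    return stats.ncf.sf(f_crit, df_between, df_within, nc)


for n_total in (60, 120, 159):
    print(f"N = {n_total}: power = {anova_power(0.25, n_total, k_groups=3):.3f}")
```

If the planned power for a plausible effect was low, a non-significant F says little about whether the groups differ.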

The following references support the statistical methods used in this one-way ANOVA calculator, covering effect size interpretation, post-hoc testing, and best practices in analysis of variance.

- Fisher, R. A. (1925). Statistical methods for research workers. Oliver and Boyd.

- Tukey, J. W. (1949). Comparing individual means in the analysis of variance. Biometrics, 5(2), 99–114. https://doi.org/10.2307/3001913

- Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates.

- American Psychological Association. (2020). Publication manual of the American Psychological Association (7th ed.). https://doi.org/10.1037/0000165-000

- Field, A. (2018). Discovering statistics using IBM SPSS statistics (5th ed.). SAGE Publications.

- Levene, H. (1960). Robust tests for equality of variances. In I. Olkin (Ed.), Contributions to probability and statistics (pp. 278–292). Stanford University Press.

- Lakens, D. (2013). Calculating and reporting effect sizes to facilitate cumulative science. Frontiers in Psychology, 4, 863. https://doi.org/10.3389/fpsyg.2013.00863

- Cumming, G. (2014). The new statistics: Why and how. Psychological Science, 25(1), 7–29. https://doi.org/10.1177/0956797613504966

- Maxwell, S. E., & Delaney, H. D. (2004). Designing experiments and analyzing data: A model comparison perspective (2nd ed.). Lawrence Erlbaum Associates.

- Wasserstein, R. L., & Lazar, N. A. (2016). The ASA's statement on p-values: Context, process, and purpose. The American Statistician, 70(2), 129–133. https://doi.org/10.1080/00031305.2016.1154108

- R Core Team. (2024). R: A language and environment for statistical computing. R Foundation for Statistical Computing. https://www.R-project.org/

- NIST/SEMATECH. (2013). e-Handbook of statistical methods. https://www.itl.nist.gov/div898/handbook/